New blog:

Full version - AVFoundation Programming Guide, chapter by chapter:

– Chapter 1: About AVFoundation

– Chapter 2: Using Assets

– Chapter 3: Playback

– Chapter 4: Editing

– Chapter 5: Still and Video Media Capture

– Chapter 6: Export

– Chapter 7: Time and Media Representations

Copyright notice: this article is the blogger's original translation. Please credit the source when reposting.

Apple source document - click here

About AVFoundation - AVFoundation 概述

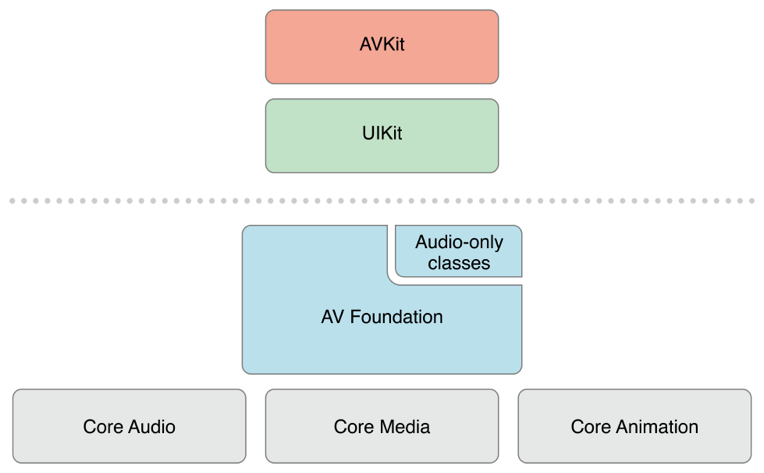

AVFoundation is one of several frameworks that you can use to play and create time-based audiovisual media. It provides an Objective-C interface you use to work on a detailed level with time-based audiovisual data. For example, you can use it to examine, create, edit, or reencode media files. You can also get input streams from devices and manipulate video during realtime capture and playback. Figure I-1 shows the architecture on iOS.

AVFoundation 是可以用它来播放和创建基于时间的视听媒体的几个框架之一。它提供了基于时间的视听数据的详细级别上的 Objective-C 接口。例如,你可以用它来检查,创建,编辑或重新编码媒体文件。您也可以从设备得到输入流和在实时捕捉回放过程中操控视频。图 I-1 显示了 iOS 上的架构。

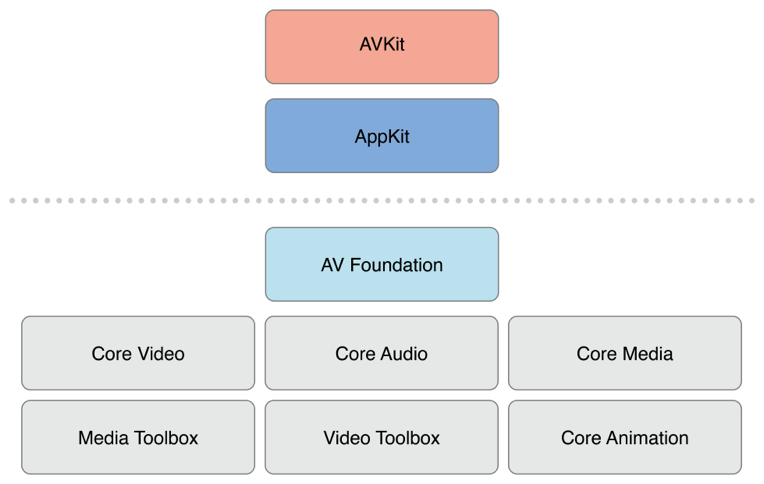

Figure I-2 shows the corresponding media architecture on OS X.

图 1-2 显示了OS X上相关媒体的架构:

You should typically use the highest-level abstraction available that allows you to perform the tasks you want.

- If you simply want to play movies, use the AVKit framework.

- On iOS, to record video when you need only minimal control over format, use the UIKit framework (UIImagePickerController).

Note, however, that some of the primitive data structures that you use in AV Foundation—including time-related data structures and opaque objects to carry and describe media data—are declared in the Core Media framework.

通常,您应该使用可用的最高级别的抽象接口,执行所需的任务。

- If you only want to play movies, use the AVKit framework.

- On iOS, when you need only minimal control over the format, record video with the UIKit framework (UIImagePickerController).

但是请注意,某些在AV Foundation 中使用的原始数据结构,包括时间相关的数据结构和不透明数据对象的传递和描述媒体数据是在Core Media framework声明的。

At a Glance - 摘要

There are two facets to the AVFoundation framework—APIs related to video and APIs related just to audio. The older audio-related classes provide easy ways to deal with audio. They are described in the Multimedia Programming Guide, not in this document.

- To play sound files, you can use AVAudioPlayer.

- To record audio, you can use AVAudioRecorder.

You can also configure the audio behavior of your application using AVAudioSession; this is described in Audio Session Programming Guide.

AVFoundation 框架包含视频相关的 APIs 和音频相关的 APIs。旧的音频相关类提供了简便的方法来处理音频。他们在 Multimedia Programming Guide, 中介绍,不在这个文档中。

- To play sound files, you can use AVAudioPlayer.

- To record audio, you can use AVAudioRecorder.

您还可以使用 AVAudioSession 来配置应用程序的音频行为; 这是在 Audio Session Programming Guide 文档中介绍的。

Representing and Using Media with AVFoundation - 用 AVFoundation 表示和使用媒体

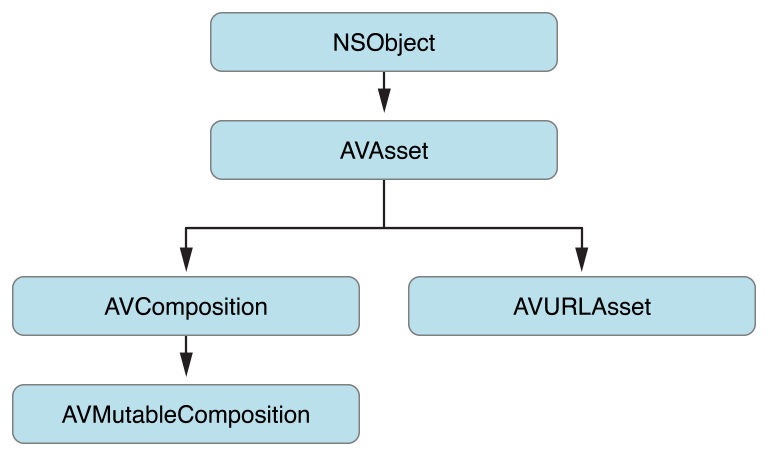

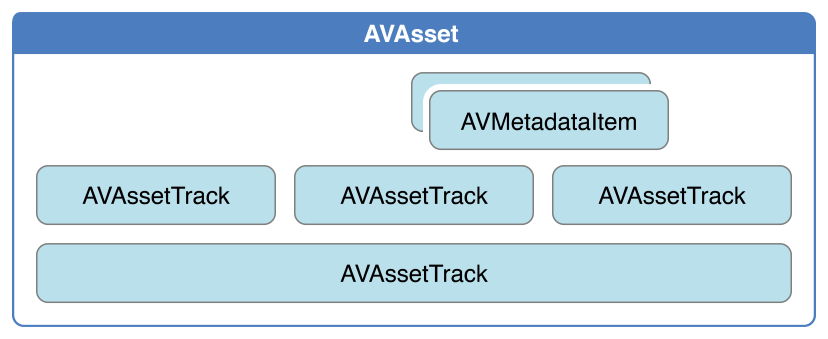

The primary class that the AV Foundation framework uses to represent media is AVAsset. The design of the framework is largely guided by this representation. Understanding its structure will help you to understand how the framework works. An AVAsset instance is an aggregated representation of a collection of one or more pieces of media data (audio and video tracks). It provides information about the collection as a whole, such as its title, duration, natural presentation size, and so on. AVAsset is not tied to a particular data format. AVAsset is the superclass of other classes used to create asset instances from media at a URL (see Using Assets) and to create new compositions (see Editing).

AV Foundation框架用来表示媒体的主要类是 AVAsset。框架的设计主要是由这种表示引导。了解它的结构将有助于您了解该框架是如何工作的。一个 AVAsset 实例的媒体数据的一个或更多个(音频和视频轨道)的集合的聚集表示。它规定将有关集合的信息作为一个整体,如它的名称,时间,自然呈现大小等的信息。 AVAsset 是不依赖于特定的数据格式。 AVAsset是常常从 URL 中的媒体创建资产实例的这种类父类(请参阅 Using Assets),并创造新的成分(见 Editing)。

Each of the individual pieces of media data in the asset is of a uniform type and called a track. In a typical simple case, one track represents the audio component, and another represents the video component; in a complex composition, however, there may be multiple overlapping tracks of audio and video. Assets may also have metadata.

Asset中媒体数据的各个部分,每一个都是一个统一的类型,把这个类型称为 “轨道”。在一个典型简单的情况下,一个轨道代表这个音频组件,另一个代表视频组件。然而复杂的组合中,有可能是多个重叠的音频和视频轨道。Assets 也可能有元数据。

A vital concept in AV Foundation is that initializing an asset or a track does not necessarily mean that it is ready for use. It may require some time to calculate even the duration of an item (an MP3 file, for example, may not contain summary information). Rather than blocking the current thread while a value is being calculated, you ask for values and get an answer back asynchronously through a callback that you define using a block.

在 AV Foundation 中一个非常重要的概念是:初始化一个 asset 或者一个轨道并不一定意味着它已经准备好可以被使用。这可能需要一些时间来计算一个项目的持续时间(例如一个 MP3 文件,其中可能不包含摘要信息)。而不是当一个值被计算的时候阻塞当前线程,你访问这个值,并且通过调用你定义的一个 block 来得到异步返回。

Relevant Chapters: Using Assets, Time and Media Representations

相关章节:Using Assets, Time and Media Representations

Playback - 播放

AVFoundation allows you to manage the playback of asset in sophisticated ways. To support this, it separates the presentation state of an asset from the asset itself. This allows you to, for example, play two different segments of the same asset at the same time rendered at different resolutions. The presentation state for an asset is managed by a player item object; the presentation state for each track within an asset is managed by a player item track object. Using the player item and player item tracks you can, for example, set the size at which the visual portion of the item is presented by the player, set the audio mix parameters and video composition settings to be applied during playback, or disable components of the asset during playback.

AVFoundation允许你用一种复杂的方式来管理asset的播放。为了支持这一点,它将一个asset的呈现状态从asset自身中分离出来。例如允许你在不同的分辨率下同时播放同一个asset中的两个不同的片段。一个asset的呈现状态是由player item对象管理的。Asset中的每个轨道的呈现状态是由player item track对象管理的。例如使用player item和player item tracks,你可以设置被播放器呈现的项目中可视的那一部分,设置音频的混合参数以及被应用于播放期间的视频组合设定,或者播放期间的禁用组件。

You play player items using a player object, and direct the output of a player to the Core Animation layer. You can use a player queue to schedule playback of a collection of player items in sequence.

你可以使用一个 player 对象来播放播放器项目,并且直接输出一个播放器给核心动画层。你可以使用一个 player queue(player 对象的队列)去给队列中player items集合中的播放项目安排序列。

Relevant Chapter: Playback

相关章节:Playback

Reading, Writing, and Reencoding Assets - 读取,写入和重新编码 Assets

AVFoundation allows you to create new representations of an asset in several ways. You can simply reencode an existing asset, or—in iOS 4.1 and later—you can perform operations on the contents of an asset and save the result as a new asset.

AVFoundation 允许你用几种方式创建新的 asset 的表现形式。你可以简单将已经存在的 asset 重新编码,或者在 iOS4.1 以及之后的版本中,你可以在一个 asset 的目录中执行一些操作并且将结果保存为一个新的 asset 。

You use an export session to reencode an existing asset into a format defined by one of a small number of commonly-used presets. If you need more control over the transformation, in iOS 4.1 and later you can use an asset reader and asset writer object in tandem to convert an asset from one representation to another. Using these objects you can, for example, choose which of the tracks you want to be represented in the output file, specify your own output format, or modify the asset during the conversion process.

你可以使用 export session 将一个现有的asset重新编码为一个小数字,这个小数字是常用的预先设定好的一些小数字中的一个。如果在转换中你需要更多的控制,在 iOS4.1 已经以后的版本中,你可以使用 asset reader 和 asset writer 对象串联的一个一个的转换。例如你可以使用这些对象选择在输出的文件中想要表示的轨道,指定你自己的输出格式,或者在转换过程中修改这个asset。

To produce a visual representation of the waveform, you use an asset reader to read the audio track of an asset.

为了产生波形的可视化表示,你可以使用asset reader去读取asset中的音频轨道。

Relevant Chapter: Using Assets

相关章节:Using Assets

Thumbnails - 缩略图

To create thumbnail images of video presentations, you initialize an instance of AVAssetImageGenerator using the asset from which you want to generate thumbnails. AVAssetImageGenerator uses the default enabled video tracks to generate images.

创建视频演示图像的缩略图,使用想要生成缩略图的asset初始化一个 AVAssetImageGenerator 的实例。AVAssetImageGenerator 使用默认启用视频轨道来生成图像。

Relevant Chapter: Using Assets

相关章节:Using Assets

Editing - 编辑

AVFoundation uses compositions to create new assets from existing pieces of media (typically, one or more video and audio tracks). You use a mutable composition to add and remove tracks, and adjust their temporal orderings. You can also set the relative volumes and ramping of audio tracks; and set the opacity, and opacity ramps, of video tracks. A composition is an assemblage of pieces of media held in memory. When you export a composition using an export session, it’s collapsed to a file.

AVFoundation 使用 compositions 去从现有的媒体片段(通常是一个或多个视频和音频轨道)创建新的 assets 。你可以使用一个可变成分去添加和删除轨道,并调整它们的时间排序。你也可以设置相对音量和增加音频轨道;并且设置不透明度,浑浊坡道,视频跟踪。一种组合物,是一种在内存中存储的介质的组合。当年你使用 export session 导出一个成份,它会坍塌到一个文件中。

You can also create an asset from media such as sample buffers or still images using an asset writer.

你也可以从媒体上创建一个asset,比如使用asset writer. 的示例缓冲区或静态图像。
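The composition workflow described above can be sketched roughly as follows. This is only an illustration: the helper name `CompositionWithLeadingVideo` is hypothetical (not from the guide), and it assumes the source asset's tracks have already been loaded.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of AVFoundation): copies the first `duration`
// of an asset's first video track into a new in-memory composition.
// Assumption: the asset's "tracks" property has already been loaded.
static AVMutableComposition *CompositionWithLeadingVideo(AVAsset *asset,
                                                         CMTime duration,
                                                         NSError **outError) {
    AVMutableComposition *composition = [AVMutableComposition composition];

    AVAssetTrack *sourceTrack = [[asset tracksWithMediaType:AVMediaTypeVideo] firstObject];
    if (sourceTrack == nil) {
        return composition; // No video track: return the empty composition.
    }

    // Add an empty video track to the composition and let it pick a track ID.
    AVMutableCompositionTrack *videoTrack =
        [composition addMutableTrackWithMediaType:AVMediaTypeVideo
                                 preferredTrackID:kCMPersistentTrackID_Invalid];

    // Insert the requested range of the source track at the start of the new track.
    CMTimeRange range = CMTimeRangeMake(kCMTimeZero, duration);
    BOOL inserted = [videoTrack insertTimeRange:range
                                        ofTrack:sourceTrack
                                         atTime:kCMTimeZero
                                          error:outError];
    return inserted ? composition : nil;
}
```

The returned composition is itself an asset, so it could be handed to an export session to collapse it to a file.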

Relevant Chapter: Editing

相关章节:Editing

Still and Video Media Capture - 静态和视频媒体捕获

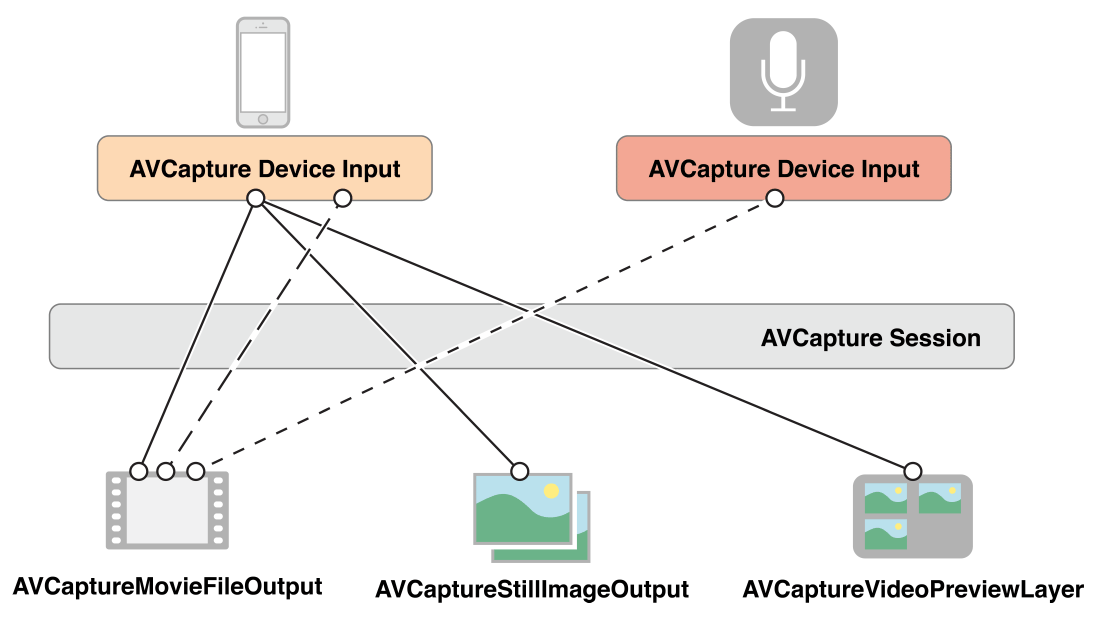

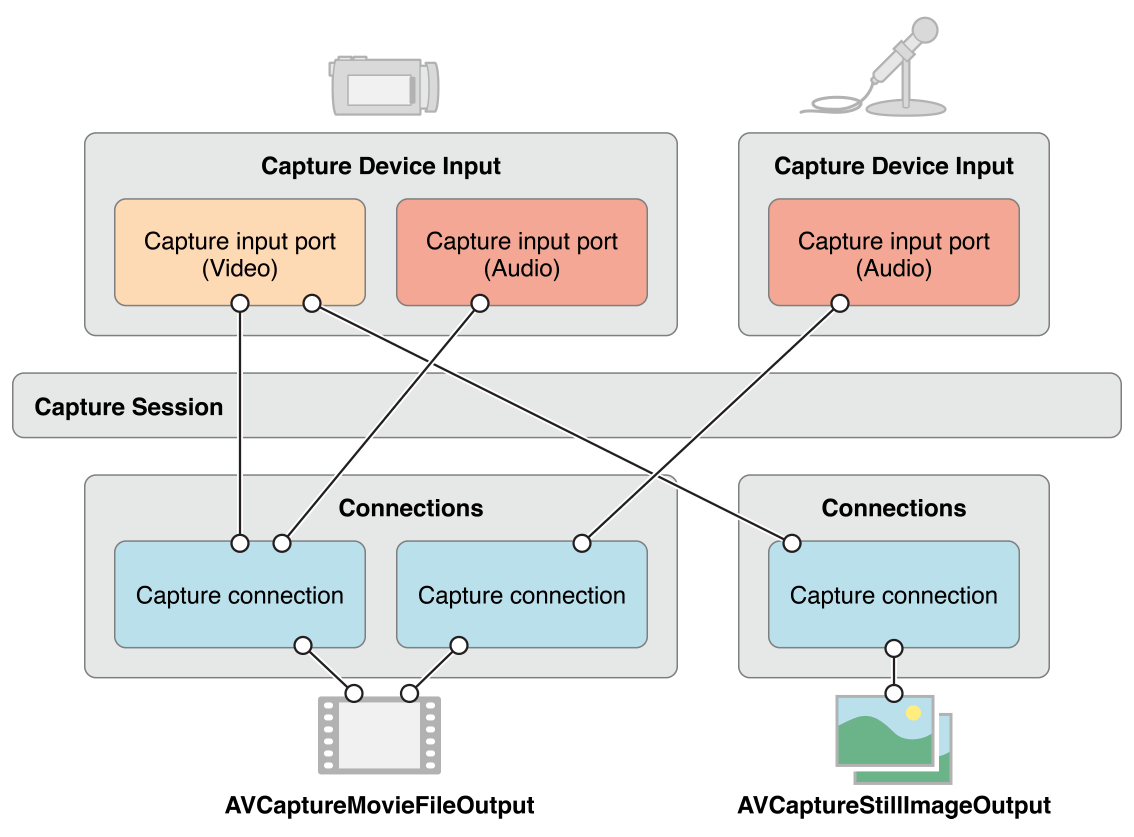

Recording input from cameras and microphones is managed by a capture session. A capture session coordinates the flow of data from input devices to outputs such as a movie file. You can configure multiple inputs and outputs for a single session, even when the session is running. You send messages to the session to start and stop data flow.

从相机和麦克风记录输入是由一个 capture session 管理的。一个 capture session 协调从输入设备到输出的数据流,比如一个电影文件。你可以为一个单一的 session 配置多个输入和输出,甚至 session 正在运行的时候也可以。你将消息发送到 session 去启动和停止数据流。

In addition, you can use an instance of a preview layer to show the user what a camera is recording.

此外,你可以使用 preview layer 的一个实例来向用户显示一个相机是正在录制的。
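As a rough sketch of the capture flow described above, the following builds a session with one camera input and one movie file output. The helper name `MakeMovieCaptureSession` is hypothetical, and the choice of the default video device is an assumption made for illustration.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of AVFoundation): builds a capture session
// with the default camera as input and a movie file as output.
// Returns nil if no camera is available or the session rejects a connection.
static AVCaptureSession *MakeMovieCaptureSession(NSError **outError) {
    AVCaptureSession *session = [[AVCaptureSession alloc] init];

    AVCaptureDevice *camera = [AVCaptureDevice defaultDeviceWithMediaType:AVMediaTypeVideo];
    if (camera == nil) {
        return nil; // For example, when running on a machine without a camera.
    }

    AVCaptureDeviceInput *input = [AVCaptureDeviceInput deviceInputWithDevice:camera
                                                                        error:outError];
    if (input == nil || ![session canAddInput:input]) {
        return nil;
    }
    [session addInput:input];

    AVCaptureMovieFileOutput *output = [[AVCaptureMovieFileOutput alloc] init];
    if (![session canAddOutput:output]) {
        return nil;
    }
    [session addOutput:output];

    return session;
}
```

A caller would typically create a preview layer with `[AVCaptureVideoPreviewLayer layerWithSession:session]`, add it to a view's layer, and then send `startRunning` and `stopRunning` to the session to control data flow.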

Relevant Chapter: Still and Video Media Capture

相关章节:Still and Video Media Capture

Concurrent Programming with AVFoundation - AVFoundation 并发编程

Callbacks from AVFoundation—invocations of blocks, key-value observers, and notification handlers—are not guaranteed to be made on any particular thread or queue. Instead, AVFoundation invokes these handlers on threads or queues on which it performs its internal tasks.

从 AVFoundation 回调,比如块的调用、键值观察者以及通知处理程序,都不能保证在任何特定的线程或队列进行。相反,AVFoundation 在线程或者执行其内部任务的队列上调用这些处理程序。

There are two general guidelines as far as notifications and threading:

- UI related notifications occur on the main thread.

- Classes or methods that require you create and/or specify a queue will return notifications on that queue.

Beyond those two guidelines (and there are exceptions, which are noted in the reference documentation) you should not assume that a notification will be returned on any specific thread.

下面是两个有关通知和线程的准则

- 在主线程上发生的与用户界面相关的通知。

- 需要创建并且 / 或者 指定一个队列的类或者方法将返回该队列的通知。

除了这两个准则(当然是有一些例外,在参考文档中会被指出),你不应该假设一个通知将在任何特定的线程返回。

If you’re writing a multithreaded application, you can use the NSThread method isMainThread or [[NSThread currentThread] isEqual:<#A stored thread reference#>] to test whether the invocation thread is a thread you expect to perform your work on. You can redirect messages to appropriate threads using methods such as performSelectorOnMainThread:withObject:waitUntilDone: and performSelector:onThread:withObject:waitUntilDone:modes:. You could also use dispatch_async to “bounce” your blocks to an appropriate queue, either the main queue for UI tasks or a queue you have set up for concurrent operations. For more about concurrent operations, see Concurrency Programming Guide; for more about blocks, see Blocks Programming Topics. The AVCam-iOS: Using AVFoundation to Capture Images and Movies sample code is considered the primary example for all AVFoundation functionality and can be consulted for examples of thread and queue usage with AVFoundation.

如果你在写一个多线程的应用程序,你可以使用 NSThread 方法 isMainThread 或者 [[NSThread currentThread] isEqual:<#A stored thread reference#>] 去测试是否调用了你期望执行你任务的线程。你可以使用方法重定向 消息给适合的线程,比如 performSelectorOnMainThread:withObject:waitUntilDone: 以及 performSelector:onThread:withObject:waitUntilDone:modes:. 你也可以使用 dispatch_async 弹回到适当队列的 blocks 中,无论是在主界面的任务队列还是有了并发操作的队列。更多关于并行操作,请查看 Concurrency Programming Guide;更多关于块,请查看 Blocks Programming Topics. AVCam-iOS: Using AVFoundation to Capture Images and Movies 示例代码是所有 AVFoundation 功能最主要的例子,可以对线程和队列使用 AVFoundation 实例参考。
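One way to apply the "bounce to the main queue" advice is a small helper like the one below. It is a minimal sketch: `RunOnMainQueue` is a hypothetical name, not an AVFoundation or Foundation API.

```objc
#import <Foundation/Foundation.h>

// Hypothetical helper (not part of AVFoundation): runs a block on the main
// queue. If the caller is already on the main thread the block runs
// immediately; otherwise it is dispatched asynchronously.
static void RunOnMainQueue(dispatch_block_t block) {
    if ([NSThread isMainThread]) {
        block();
    } else {
        dispatch_async(dispatch_get_main_queue(), block);
    }
}
```

Inside an AVFoundation completion handler, UI work could then be wrapped as `RunOnMainQueue(^{ /* update the UI */ });` without assuming which thread invoked the handler.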

Prerequisites - 预备知识

AVFoundation is an advanced Cocoa framework. To use it effectively, you must have:

- A solid understanding of fundamental Cocoa development tools and techniques

- A basic grasp of blocks

- A basic understanding of key-value coding and key-value observing

- For playback, a basic understanding of Core Animation (see Core Animation Programming Guide) or, for basic playback, the AVKit Framework Reference.

AVFoundation 是一种先进的 Cocoa 框架,为了有效的使用,你必须掌握下面的知识:

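- A solid understanding of fundamental Cocoa development tools and techniques

- A basic grasp of blocks

- A basic understanding of key-value coding and key-value observing

- For playback, a basic understanding of Core Animation (see Core Animation Programming Guide) or, for basic playback, the AVKit Framework Reference.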

See Also - 参考

There are several AVFoundation examples, including two that are key to understanding and implementing camera capture functionality:

- AVCam-iOS: Using AVFoundation to Capture Images and Movies is the canonical sample code for implementing any program that uses the camera functionality. It is a complete sample, well documented, and covers the majority of the functionality showing the best practices.

- AVCamManual: Extending AVCam to Use Manual Capture API is the companion application to AVCam. It implements Camera functionality using the manual camera controls. It is also a complete example, well documented, and should be considered the canonical example for creating camera applications that take advantage of manual controls.

- RosyWriter is an example that demonstrates real time frame processing and in particular how to apply filters to video content. This is a very common developer requirement and this example covers that functionality.

- AVLocationPlayer: Using AVFoundation Metadata Reading APIs demonstrates using the metadata APIs.

有几个 AVFoundation 的例子,包括两个理解和实现摄像头捕捉功能的关键点:

- AVCam-iOS: Using AVFoundation to Capture Images and Movies 是实现任何想使用摄像头功能的程序的典型示例代码。它是一个完整的样本,以及记录,并涵盖了大部分主要的功能。

- AVCamManual: Extending AVCam to Use Manual Capture API 是 AVCam 相对应的应用程序。它使用手动相机控制实现相机功能。它也是一个完成的例子,以及记录,并且应该被视为利用手动控制创建相机应用程序的典型例子。

- RosyWriter 是一个演示实时帧处理的例子,特别是如果过滤器应用到视频内容。这是一个非常普遍的开发人员的需求,这个例子涵盖了这个功能。

- AVLocationPlayer: Using AVFoundation Metadata Reading APIs demonstrates using the metadata APIs.

Using Assets - 使用 Assets

Assets can come from a file or from media in the user’s iPod library or Photo library. When you create an asset object all the information that you might want to retrieve for that item is not immediately available. Once you have a movie asset, you can extract still images from it, transcode it to another format, or trim the contents.

Assets 可以来自文件或者媒体用户的 iPod 库、图片库。当你创建一个 asset 对象时,所有你可能想要检索该项目的信息不是立即可用的。一旦你有了一个电影 asset ,你可以从里面提取静态图像,转换到另一个格式,或者对内容就行修剪。

Creating an Asset Object - 创建一个 Asset 对象

To create an asset to represent any resource that you can identify using a URL, you use AVURLAsset. The simplest case is creating an asset from a file:

为了创建一个 asset ,去代表任何你能用一个 URL 识别的资源,你可以使用 AVURLAsset . 最简单的情况是从一个文件创建一个 asset 。

NSURL *url = <#A URL that identifies an audiovisual asset such as a movie file#>;
AVURLAsset *anAsset = [[AVURLAsset alloc] initWithURL:url options:nil];

Options for Initializing an Asset - 初始化一个 Asset 的选择

The AVURLAsset initialization methods take as their second argument an options dictionary. The only key used in the dictionary is AVURLAssetPreferPreciseDurationAndTimingKey. The corresponding value is a Boolean (contained in an NSValue object) that indicates whether the asset should be prepared to indicate a precise duration and provide precise random access by time.

AVURLAsset 初始化方法作为它们的第二个参数选项字典。本字典中唯一被使用的 key 是 AVURLAssetPreferPreciseDurationAndTimingKey. 相应的值是一个布尔值(包含在一个 NSValue 对象中),这个布尔值指出是否该 asset 应该准备标出一个精确的时间和提供一个以时间为种子的随机存取。

Getting the exact duration of an asset may require significant processing overhead. Using an approximate duration is typically a cheaper operation and sufficient for playback. Thus:

- If you only intend to play the asset, either pass nil instead of a dictionary, or pass a dictionary that contains the AVURLAssetPreferPreciseDurationAndTimingKey key and a corresponding value of NO (contained in an NSValue object).

- If you want to add the asset to a composition (AVMutableComposition), you typically need precise random access. Pass a dictionary that contains the AVURLAssetPreferPreciseDurationAndTimingKey key and a corresponding value of YES (contained in an NSValue object; recall that NSNumber inherits from NSValue):

获得一个 asset 的确切持续时间可能需要大量的处理开销。使用一个近似的持续时间通常是一个更便宜的操作并且对于播放已经足够了。因此:

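- If you only intend to play the asset, either pass nil instead of a dictionary, or pass a dictionary that contains the AVURLAssetPreferPreciseDurationAndTimingKey key with a corresponding value of NO (contained in an NSValue object).

- If you want to add the asset to a composition (AVMutableComposition), you typically need precise random access. Pass a dictionary that contains the AVURLAssetPreferPreciseDurationAndTimingKey key with a corresponding value of YES (contained in an NSValue object; recall that NSNumber inherits from NSValue).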

NSURL *url = <#A URL that identifies an audiovisual asset such as a movie file#>;
NSDictionary *options = @{ AVURLAssetPreferPreciseDurationAndTimingKey : @YES };
AVURLAsset *anAssetToUseInAComposition = [[AVURLAsset alloc] initWithURL:url options:options];

Accessing the User’s Assets - 访问用户的Assets

To access the assets managed by the iPod library or by the Photos application, you need to get a URL of the asset you want.

- To access the iPod Library, you create an MPMediaQuery instance to find the item you want, then get its URL using MPMediaItemPropertyAssetURL. For more about the Media Library, see Multimedia Programming Guide.

- To access the assets managed by the Photos application, you use ALAssetsLibrary.

The following example shows how you can get an asset to represent the first video in the Saved Photos Album.

为了访问由 iPod 库或者照片应用程序管理的 assets ,你需要得到你想要 asset 的一个 URL。

- To access the iPod library, create an MPMediaQuery instance to find the item you want, then get its URL using MPMediaItemPropertyAssetURL.

- To access the assets managed by the Photos application, use ALAssetsLibrary.

下面的例子展示了如何获得一个 asset 来保存照片相册中的第一个视频。

ALAssetsLibrary *library = [[ALAssetsLibrary alloc] init];

// Enumerate just the photos and videos group by using ALAssetsGroupSavedPhotos.
[library enumerateGroupsWithTypes:ALAssetsGroupSavedPhotos usingBlock:^(ALAssetsGroup *group, BOOL *stop) {

    // Within the group enumeration block, filter to enumerate just videos.
    [group setAssetsFilter:[ALAssetsFilter allVideos]];

    // For this example, we're only interested in the first item.
    [group enumerateAssetsAtIndexes:[NSIndexSet indexSetWithIndex:0]
                            options:0
                         usingBlock:^(ALAsset *alAsset, NSUInteger index, BOOL *innerStop) {

        // The end of the enumeration is signaled by alAsset == nil.
        if (alAsset) {
            ALAssetRepresentation *representation = [alAsset defaultRepresentation];
            NSURL *url = [representation url];
            AVAsset *avAsset = [AVURLAsset URLAssetWithURL:url options:nil];
            // Do something interesting with the AV asset.
        }
    }];
}
failureBlock: ^(NSError *error) {
    // Typically you should handle an error more gracefully than this.
    NSLog(@"No groups");
}];

Preparing an Asset for Use - 将 Asset 准备好使用

Initializing an asset (or track) does not necessarily mean that all the information that you might want to retrieve for that item is immediately available. It may require some time to calculate even the duration of an item (an MP3 file, for example, may not contain summary information). Rather than blocking the current thread while a value is being calculated, you should use the AVAsynchronousKeyValueLoading protocol to ask for values and get an answer back later through a completion handler you define using a block. (AVAsset and AVAssetTrack conform to the AVAsynchronousKeyValueLoading protocol.)

初始化一个 asset (或者轨道)并不意味着你可能想要检索该项的所有信息是立即可用的。这可能需要一些时间来计算一个项目的持续时间(例如一个 MP3 文件可能不包含摘要信息)。当一个值被计算的时候不应该阻塞当前线程,你应该使用 AVAsynchronousKeyValueLoading 协议去请求值,通过完成处理你定义使用的一个 block 后得到答复。(AVAsset and AVAssetTrack 遵循 AVAsynchronousKeyValueLoading 协议.)

You test whether a value is loaded for a property using statusOfValueForKey:error:. When an asset is first loaded, the value of most or all of its properties is AVKeyValueStatusUnknown. To load a value for one or more properties, you invoke loadValuesAsynchronouslyForKeys:completionHandler:. In the completion handler, you take whatever action is appropriate depending on the property’s status. You should always be prepared for loading to not complete successfully, either because it failed for some reason such as a network-based URL being inaccessible, or because the load was canceled.

测试一个值是否是使用 statusOfValueForKey:error: 加载为一个属性。当 asset 被首次加载时,大部分的或全部属性值是 AVKeyValueStatusUnknown。为一个或多个属性加载一个值,调用 loadValuesAsynchronouslyForKeys:completionHandler:。在完成处理程序中,你采取的行动是否恰当,取决于属性的状态。你应该总是准备加载不会完全成功,它可能有一些原因,比如基于网络的 URL是无法访问的,或者因为负载被取消。

NSURL *url = <#A URL that identifies an audiovisual asset such as a movie file#>;
AVURLAsset *anAsset = [[AVURLAsset alloc] initWithURL:url options:nil];
NSArray *keys = @[@"duration"];

[anAsset loadValuesAsynchronouslyForKeys:keys completionHandler:^() {

    NSError *error = nil;
    AVKeyValueStatus durationStatus = [anAsset statusOfValueForKey:@"duration" error:&error];
    switch (durationStatus) {
        case AVKeyValueStatusLoaded:
            [self updateUserInterfaceForDuration];
            break;
        case AVKeyValueStatusFailed:
            [self reportError:error forAsset:anAsset];
            break;
        case AVKeyValueStatusCancelled:
            // Do whatever is appropriate for cancelation.
            break;
        default:
            // AVKeyValueStatusUnknown or AVKeyValueStatusLoading: nothing to do yet.
            break;
    }
}];

If you want to prepare an asset for playback, you should load its tracks property. For more about playing assets, see Playback.

如果你想准备一个 asset 去播放,你应该加载它的轨道属性。更多有关播放 assets,请看 Playback
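Loading the tracks property before playback might look like the sketch below. The helper name `PrepareForPlayback` and its completion signature are hypothetical; delivering the result on the main queue is a design choice made here, not something the guide mandates.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of AVFoundation): asynchronously loads the
// asset's "tracks" key, then reports either a ready-to-use player item or
// the loading error. The completion block is always called on the main queue.
static void PrepareForPlayback(AVURLAsset *asset,
                               void (^completion)(AVPlayerItem *item, NSError *error)) {
    NSString *tracksKey = @"tracks";
    [asset loadValuesAsynchronouslyForKeys:@[tracksKey] completionHandler:^{
        NSError *error = nil;
        AVKeyValueStatus status = [asset statusOfValueForKey:tracksKey error:&error];

        // Only build a player item once the tracks have actually loaded.
        AVPlayerItem *item = nil;
        if (status == AVKeyValueStatusLoaded) {
            item = [AVPlayerItem playerItemWithAsset:asset];
        }

        dispatch_async(dispatch_get_main_queue(), ^{
            completion(item, item ? nil : error);
        });
    }];
}
```

Because the handler may be invoked on an arbitrary queue, the sketch hops to the main queue before calling back, so the caller can touch UI state directly.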

Getting Still Images From a Video - 从视频中获取静态图像

To get still images such as thumbnails from an asset for playback, you use an AVAssetImageGenerator object. You initialize an image generator with your asset. Initialization may succeed, though, even if the asset possesses no visual tracks at the time of initialization, so if necessary you should test whether the asset has any tracks with the visual characteristic using tracksWithMediaCharacteristic:.

为了从一个准备播放的 asset 中得到静态图像,比如缩略图,可以使用 AVAssetImageGenerator 对象。用你的 asset 初始化一个图像发生器。不过即使 asset 进程在初始化的时候没有视觉跟踪,也可以成功,所以如果有必要,你应该测试一下, asset 是否有轨道有使用 tracksWithMediaCharacteristic 的视觉特征。

AVAsset *anAsset = <#Get an asset#>;
if ([[anAsset tracksWithMediaType:AVMediaTypeVideo] count] > 0) {
    AVAssetImageGenerator *imageGenerator =
        [AVAssetImageGenerator assetImageGeneratorWithAsset:anAsset];
    // Implementation continues...
}

You can configure several aspects of the image generator. For example, you can specify the maximum dimensions for the images it generates and the aperture mode using maximumSize and apertureMode respectively. You can then generate a single image at a given time, or a series of images. You must ensure that you keep a strong reference to the image generator until it has generated all the images.

你可以配置几个图像发生器的部分,例如,可以指定生成的图像采用最大值,并且光圈的模式分别使用 maximumSize 和 apertureMode 。然后可以在给定的时间生成一个单独的图像,或者一系列图像。你必须确定,在生成所有图像之前,必须对图像生成器保持一个强引用。
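Configuring those two properties could look like this minimal sketch. The helper name `MakeThumbnailGenerator` and the 320 x 180 bounding size are illustrative assumptions, not values from the guide.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of AVFoundation): creates an image generator
// configured for small thumbnails. The caller must keep a strong reference
// to the returned generator until all images have been generated.
static AVAssetImageGenerator *MakeThumbnailGenerator(AVAsset *asset) {
    AVAssetImageGenerator *generator =
        [AVAssetImageGenerator assetImageGeneratorWithAsset:asset];

    // Upper bound on the generated image dimensions (assumed value).
    generator.maximumSize = CGSizeMake(320.0, 180.0);

    // Use the clean aperture when producing images.
    generator.apertureMode = AVAssetImageGeneratorApertureModeCleanAperture;

    return generator;
}
```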

Generating a Single Image - 生成一个单独的图像

You use copyCGImageAtTime:actualTime:error: to generate a single image at a specific time. AVFoundation may not be able to produce an image at exactly the time you request, so you can pass as the second argument a pointer to a CMTime that upon return contains the time at which the image was actually generated.

使用 copyCGImageAtTime:actualTime:error: 方法在指定时间生成一个图像。AVFoundation 在你要求的确切时间可能无法产生一个图像,所以你可以将一个指向 CMTime 的指针当做第二个参数穿过去,这个指针返回的时候包含图像被实际生成的时间。

AVAsset *myAsset = <#An asset#>;
AVAssetImageGenerator *imageGenerator = [[AVAssetImageGenerator alloc] initWithAsset:myAsset];

Float64 durationSeconds = CMTimeGetSeconds([myAsset duration]);
CMTime midpoint = CMTimeMakeWithSeconds(durationSeconds/2.0, 600);
NSError *error = nil;
CMTime actualTime;

CGImageRef halfWayImage = [imageGenerator copyCGImageAtTime:midpoint actualTime:&actualTime error:&error];

if (halfWayImage != NULL) {

    // CMTimeCopyDescription returns a retained CFStringRef; CFBridgingRelease transfers ownership to ARC.
    NSString *actualTimeString = (NSString *)CFBridgingRelease(CMTimeCopyDescription(NULL, actualTime));
    NSString *requestedTimeString = (NSString *)CFBridgingRelease(CMTimeCopyDescription(NULL, midpoint));
    NSLog(@"Got halfWayImage: Asked for %@, got %@", requestedTimeString, actualTimeString);

    // Do something interesting with the image.
    CGImageRelease(halfWayImage);
}

Generating a Sequence of Images - 生成一系列图像

To generate a series of images, you send the image generator a generateCGImagesAsynchronouslyForTimes:completionHandler: message. The first argument is an array of NSValue objects, each containing a CMTime structure, specifying the asset times for which you want images to be generated. The second argument is a block that serves as a callback invoked for each image that is generated. The block arguments provide a result constant that tells you whether the image was created successfully or if the operation was canceled, and, as appropriate:

- The image

- The time for which you requested the image and the actual time for which the image was generated

- An error object that describes the reason generation failed

In your implementation of the block, check the result constant to determine whether the image was created. In addition, ensure that you keep a strong reference to the image generator until it has finished creating the images.

生成一系列图像,可以给图像生成器发送 generateCGImagesAsynchronouslyForTimes:completionHandler: 消息。第一个参数是一个 NSValue 对象的数组,每个都包含一个 CMTime 结构体,指定了图像想要被生成的 asset 时间。block 参数提供了一个结果,这个结果包含了告诉你是否图像被成功生成,或者操作某些情况下被取消。结果:

- 图像

- 你要求的图像和图像生成的实际时间

- An error object that describes why generation failed

在 block 的实现中,检查结果常数,来确定图像是否被创建。此外,在完成创建图像之前,确保保持一个强引用给图像生成器。

AVAsset *myAsset = <#An asset#>;
// Assume: @property (strong) AVAssetImageGenerator *imageGenerator;
self.imageGenerator = [AVAssetImageGenerator assetImageGeneratorWithAsset:myAsset];

Float64 durationSeconds = CMTimeGetSeconds([myAsset duration]);
CMTime firstThird = CMTimeMakeWithSeconds(durationSeconds/3.0, 600);
CMTime secondThird = CMTimeMakeWithSeconds(durationSeconds*2.0/3.0, 600);
CMTime end = CMTimeMakeWithSeconds(durationSeconds, 600);
NSArray *times = @[[NSValue valueWithCMTime:kCMTimeZero],
                   [NSValue valueWithCMTime:firstThird], [NSValue valueWithCMTime:secondThird],
                   [NSValue valueWithCMTime:end]];

[self.imageGenerator generateCGImagesAsynchronouslyForTimes:times
                completionHandler:^(CMTime requestedTime, CGImageRef image, CMTime actualTime,
                                    AVAssetImageGeneratorResult result, NSError *error) {

    NSString *requestedTimeString = (NSString *)
        CFBridgingRelease(CMTimeCopyDescription(NULL, requestedTime));
    NSString *actualTimeString = (NSString *)
        CFBridgingRelease(CMTimeCopyDescription(NULL, actualTime));
    NSLog(@"Requested: %@; actual %@", requestedTimeString, actualTimeString);

    if (result == AVAssetImageGeneratorSucceeded) {
        // Do something interesting with the image.
    }

    if (result == AVAssetImageGeneratorFailed) {
        NSLog(@"Failed with error: %@", [error localizedDescription]);
    }

    if (result == AVAssetImageGeneratorCancelled) {
        NSLog(@"Canceled");
    }
}];

You can cancel the generation of the image sequence by sending the image generator a cancelAllCGImageGeneration message.

你发送给图像生成器一个 cancelAllCGImageGeneration 消息,可以取消队列中的图像生成。

Trimming and Transcoding a Movie - 微调和转化为一个电影

You can transcode a movie from one format to another, and trim a movie, using an AVAssetExportSession object. The workflow is shown in Figure 1-1. An export session is a controller object that manages asynchronous export of an asset. You initialize the session using the asset you want to export and the name of an export preset that indicates the export options you want to apply (see allExportPresets). You then configure the export session to specify the output URL and file type, and optionally other settings such as the metadata and whether the output should be optimized for network use.

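Translator's note: from this point on, "asset" is rendered uniformly as "资产" in the Chinese text; switching to code formatting and adding quotation marks each time is rather tedious.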

你可以使用 AVAssetExportSession 对象,将一个电影的编码进行转换,并且对电影进行微调。工作流程如图 1-1 所示。一个 export session 是一个控制器对象,管理一个资产的异步导出。使用想要导出的资产初始化一个 session 和输出设定的名称,这个输出设定表明你想申请的导出选项(allExportPresets)。然后配置导出会话去指定输出的 URL 和文件类型,以及其他可选的设定,比如元数据,是否将输出优化用于网络使用。

You can check whether you can export a given asset using a given preset using exportPresetsCompatibleWithAsset: as illustrated in this example:

你可以检查你能否用给定的预设导出一个给定的资产,使用 exportPresetsCompatibleWithAsset: 作为示例。

AVAsset *anAsset = <#Get an asset#>;
NSArray *compatiblePresets = [AVAssetExportSession exportPresetsCompatibleWithAsset:anAsset];
if ([compatiblePresets containsObject:AVAssetExportPresetLowQuality]) {
    AVAssetExportSession *exportSession = [[AVAssetExportSession alloc]
        initWithAsset:anAsset presetName:AVAssetExportPresetLowQuality];
    // Implementation continues.
}

You complete the configuration of the session by providing the output URL (the URL must be a file URL). AVAssetExportSession can infer the output file type from the URL’s path extension; typically, however, you set it directly using outputFileType. You can also specify additional properties such as the time range, a limit for the output file length, whether the exported file should be optimized for network use, and a video composition. The following example illustrates how to use the timeRange property to trim the movie:

完成会话的配置,是由输出的 URL (URL 必须是文件的 URL)控制的。AVAssetExportSession 可以从 URL 的路径延伸推断输出文件的类型。然而通常情况下,直接使用 outputFileType 设定。还可以指定附加属性,如时间范围、输出文件长度的限制、导出的文件是否应该为了网络使用而优化、还有一个视频的构成。下面的示例展示了如果使用 timeRange 属性修剪电影。

exportSession.outputURL = <#A file URL#>;
exportSession.outputFileType = AVFileTypeQuickTimeMovie;

CMTime start = CMTimeMakeWithSeconds(1.0, 600);
CMTime duration = CMTimeMakeWithSeconds(3.0, 600);
CMTimeRange range = CMTimeRangeMake(start, duration);
exportSession.timeRange = range;

To create the new file, you invoke exportAsynchronouslyWithCompletionHandler:. The completion handler block is called when the export operation finishes; in your implementation of the handler, you should check the session’s status value to determine whether the export was successful, failed, or was canceled:

调用 exportAsynchronouslyWithCompletionHandler: 创建新的文件。当导出操作完成的时候完成处理的 block 被调用,你应该检查会话的 status 值,去判断导出是否成功、失败或者被取消。

[exportSession exportAsynchronouslyWithCompletionHandler:^{

    switch ([exportSession status]) {
        case AVAssetExportSessionStatusFailed:
            NSLog(@"Export failed: %@", [[exportSession error] localizedDescription]);
            break;
        case AVAssetExportSessionStatusCancelled:
            NSLog(@"Export canceled");
            break;
        default:
            break;
    }
}];

You can cancel the export by sending the session a cancelExport message.

The export will fail if you try to overwrite an existing file, or write a file outside of the application’s sandbox. It may also fail if:

- There is an incoming phone call

- Your application is in the background and another application starts playback

In these situations, you should typically inform the user that the export failed, then allow the user to restart the export.

你可以通过给会话发送一个 cancelExport 消息来取消导出。

如果你尝试覆盖一个现有的文件或者在应用程序的沙盒外部写一个文件,都将会是导出失败。如果发生下面情况也可能失败:

- 有一个来电

- 你的应用程序在后台并且另一个程序开始播放

在这种情况下,你通常应该通知用户导出失败,然后允许用户重新启动导出。
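Since an export fails when the output file already exists, one option is to clear the destination before starting. The sketch below is only one possible policy: `RemoveExistingExportFile` is a hypothetical helper, and silently deleting an earlier file is an assumption that may not suit every application.

```objc
#import <Foundation/Foundation.h>

// Hypothetical helper (not part of AVFoundation): removes a stale file at the
// export destination so a new export does not fail on an existing file.
// Returns YES if the destination is clear afterwards, NO if removal failed.
static BOOL RemoveExistingExportFile(NSURL *outputURL, NSError **outError) {
    NSFileManager *fileManager = [NSFileManager defaultManager];
    if (![fileManager fileExistsAtPath:outputURL.path]) {
        return YES; // Nothing to remove.
    }
    return [fileManager removeItemAtURL:outputURL error:outError];
}
```

A caller would invoke this with the same file URL it is about to assign to the session's outputURL, and skip the export if it returns NO.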

Playback - 播放

To control the playback of assets, you use an AVPlayer object. During playback, you can use an AVPlayerItem instance to manage the presentation state of an asset as a whole, and an AVPlayerItemTrack object to manage the presentation state of an individual track. To display video, you use an AVPlayerLayer object.

使用 AVPlayer 对象控制资产的播放。在播放期间,可以使用一个 AVPlayerItem 实例去管理资产作为一个整体的显示状态,AVPlayerItemTrack 对象来管理一个单独轨道的显示状态。使用 AVPlayerLayer 显示视频。

Playing Assets - 播放资产

A player is a controller object that you use to manage playback of an asset, for example starting and stopping playback, and seeking to a particular time. You use an instance of AVPlayer to play a single asset. You can use an AVQueuePlayer object to play a number of items in sequence (AVQueuePlayer is a subclass of AVPlayer). On OS X you have the option of using the AVKit framework’s AVPlayerView class to play the content back within a view.

播放器是一个控制器对象,使用这个控制器对象去管理一个资产的播放,例如开始和停止播放,以及跳转到指定的时间。使用 AVPlayer 的实例去播放单个资产。可以使用 AVQueuePlayer 对象按顺序播放多个项目(AVQueuePlayer 是 AVPlayer 的子类)。在 OS X 系统中,可以选择使用 AVKit 框架的 AVPlayerView 类在视图中播放内容。

A player provides you with information about the state of the playback so, if you need to, you can synchronize your user interface with the player’s state. You typically direct the output of a player to a specialized Core Animation layer (an instance of AVPlayerLayer or AVSynchronizedLayer). To learn more about layers, see Core Animation Programming Guide.

播放器提供了关于播放状态的信息,因此如果需要,可以将用户界面与播放器的状态同步。通常将播放器的输出指向专门的动画核心层(AVPlayerLayer 或者 AVSynchronizedLayer 的一个实例)。想要了解更多关于 layers,请看 Core Animation Programming Guide。

Multiple player layers: You can create many AVPlayerLayer objects from a single AVPlayer instance, but only the most recently created such layer will display any video content onscreen.

多个播放器层:可以从一个单独的 AVPlayer 实例创建许多 AVPlayerLayer 对象,但是只有最近被创建的那一层将会在屏幕上显示视频的内容。

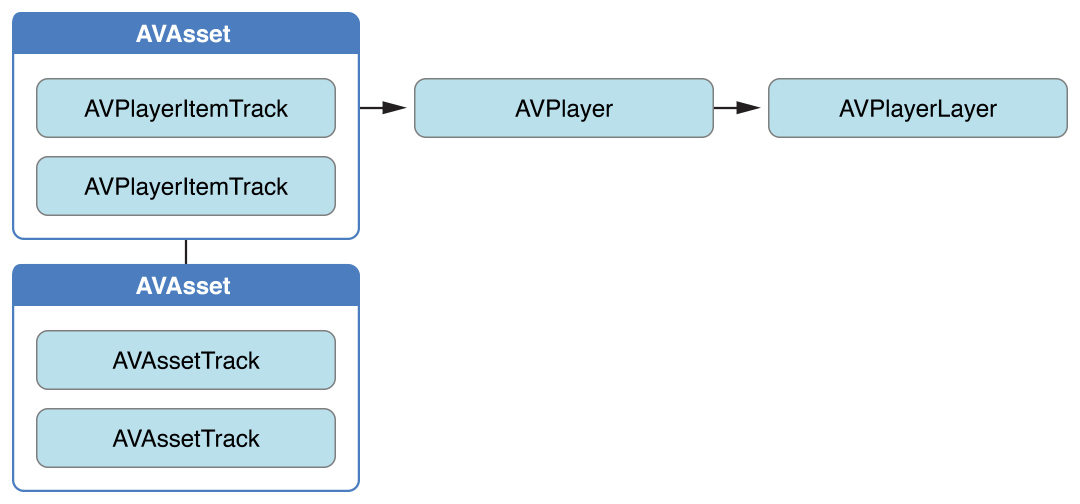

Although ultimately you want to play an asset, you don’t provide assets directly to an AVPlayer object. Instead, you provide an instance of AVPlayerItem. A player item manages the presentation state of an asset with which it is associated. A player item contains player item tracks—instances of AVPlayerItemTrack—that correspond to the tracks in the asset. The relationship between the various objects is shown in Figure 2-1.

虽然最终想要播放的是资产,但并不直接把资产提供给 AVPlayer 对象。相反,提供一个 AVPlayerItem 的实例。player item 管理与它相关联的资产的显示状态。player item 包含播放器项目轨道(AVPlayerItemTrack 的实例),对应资产内的轨道。各个对象之间的关系如图 2-1 所示。

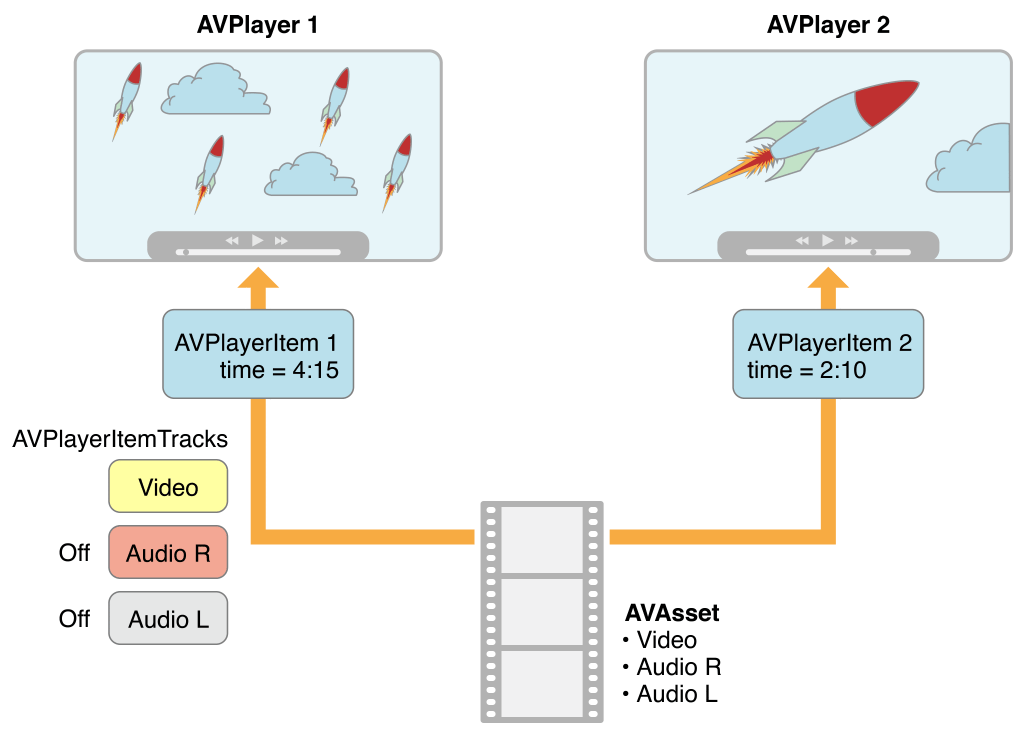

This abstraction means that you can play a given asset using different players simultaneously, but rendered in different ways by each player. Figure 2-2 shows one possibility, with two different players playing the same asset, with different settings. Using the item tracks, you can, for example, disable a particular track during playback (for example, you might not want to play the sound component).

这种抽象意味着可以同时使用不同的播放器播放一个给定的资产,但每个播放器都以不同的方式呈现。图 2-2 显示了一种可能性,两个不同的播放器以不同的设定播放同一个资产。例如,可以使用项目轨道在播放期间禁用某个特定的轨道(比如,你可能不想播放声音部分)。

You can initialize a player item with an existing asset, or you can initialize a player item directly from a URL so that you can play a resource at a particular location (AVPlayerItem will then create and configure an asset for the resource). As with AVAsset, though, simply initializing a player item doesn’t necessarily mean it’s ready for immediate playback. You can observe (using key-value observing) an item’s status property to determine if and when it’s ready to play.

可以用现有的资产初始化一个播放器项目,也可以直接从一个 URL 初始化播放器项目,以便播放位于特定位置的资源(AVPlayerItem 将为资源创建和配置资产)。不过与 AVAsset 一样,简单地初始化一个播放器项目并不一定意味着它已经准备好可以立即播放了。可以观察(使用 key-value observing)一个项目的 status 属性,以确定它是否以及何时准备好播放。

Handling Different Types of Asset - 处理不同类型的资产

The way you configure an asset for playback may depend on the sort of asset you want to play. Broadly speaking, there are two main types: file-based assets, to which you have random access (such as from a local file, the camera roll, or the Media Library), and stream-based assets (HTTP Live Streaming format).

配置一个准备播放的资产的方法可能取决于你想播放的资产的种类。概括地说,主要有两种类型:基于文件的资产,可以随机访问(例如来自本地文件、相机胶卷或者媒体库),和基于流的资产(HTTP 直播流媒体格式)。

To load and play a file-based asset. There are several steps to playing a file-based asset:

- Create an asset using AVURLAsset.

- Create an instance of AVPlayerItem using the asset.

- Associate the item with an instance of AVPlayer.

- Wait until the item’s status property indicates that it’s ready to play (typically you use key-value observing to receive a notification when the status changes).

This approach is illustrated in Putting It All Together: Playing a Video File Using AVPlayerLayer.

To create and prepare an HTTP live stream for playback. Initialize an instance of AVPlayerItem using the URL. (You cannot directly create an AVAsset instance to represent the media in an HTTP Live Stream.)

加载和播放一个基于文件的资产。播放基于文件的资产有几个步骤:

- 使用 AVURLAsset 创建一个资产

- 使用资产创建一个 AVPlayerItem 的实例

- 将项目与 AVPlayer 的实例关联

- 等待,直到项目的 status 属性表明已经准备好播放了(通常使用 key-value observing,在状态改变时接收通知)

该方法的说明都在:Putting It All Together: Playing a Video File Using AVPlayerLayer

创建和编写能够播放的 HTTP 直播流媒体。使用 URL 初始化一个 AVPlayerItem 的实例。(你不能直接创建一个 AVAsset 的实例去代表媒体在 HTTP 直播流中)

NSURL *url = [NSURL URLWithString:@"<#Live stream URL#>"];

// You may find a test stream at <http://devimages.apple.com/iphone/samples/bipbop/bipbopall.m3u8>.

self.playerItem = [AVPlayerItem playerItemWithURL:url];

[self.playerItem addObserver:self forKeyPath:@"status" options:0 context:&ItemStatusContext];

self.player = [AVPlayer playerWithPlayerItem:self.playerItem];

When you associate the player item with a player, it starts to become ready to play. When it is ready to play, the player item creates the AVAsset and AVAssetTrack instances, which you can use to inspect the contents of the live stream.

To get the duration of a streaming item, you can observe the duration property on the player item. When the item becomes ready to play, this property updates to the correct value for the stream.

当你把播放项目和播放器联结起来时,它开始准备播放。当它准备播放时,播放项目创建 AVAsset 和 AVAssetTrack 实例,可以用它来检查直播流的内容。

获取一个流项目的持续时间,可以观察播放项目的 duration 属性。当项目准备就绪时,这个属性更新为流的正确值。

Note: Using the duration property on the player item requires iOS 4.3 or later. An approach that is compatible with all versions of iOS involves observing the status property of the player item. When the status becomes AVPlayerItemStatusReadyToPlay, the duration can be fetched with the following line of code:

注意:在播放项目上使用 duration 属性要求 iOS 4.3 或者更高的版本。一种兼容所有 iOS 版本的方法是观察播放项目的 status 属性。当 status 变成 AVPlayerItemStatusReadyToPlay 时,可以用下面这行代码获取持续时间:

[[[[[playerItem tracks] objectAtIndex:0] assetTrack] asset] duration];

If you simply want to play a live stream, you can take a shortcut and create a player directly from the URL, using the following code:

如果你只是想播放一个直播流,你可以采取一种快捷方式,并使用 URL 直接创建一个播放器,代码如下:

self.player = [AVPlayer playerWithURL:<#Live stream URL#>];

[self.player addObserver:self forKeyPath:@"status" options:0 context:&PlayerStatusContext];

As with assets and items, initializing the player does not mean it’s ready for playback. You should observe the player’s status property, which changes to AVPlayerStatusReadyToPlay when it is ready to play. You can also observe the currentItem property to access the player item created for the stream.

与资产和项目一样,初始化播放器并不意味着它已经准备就绪可以播放。你应该观察播放器的 status 属性,当准备就绪的时候它会变为 AVPlayerStatusReadyToPlay。也可以观察 currentItem 属性去访问为流创建的播放项目。

If you don’t know what kind of URL you have, follow these steps:

- Try to initialize an AVURLAsset using the URL, then load its tracks key.

If the tracks load successfully, then you create a player item for the asset.

- If the first step fails, create an AVPlayerItem directly from the URL.

Observe the player’s status property to determine whether it becomes playable.

If either route succeeds, you end up with a player item that you can then associate with a player.

如果你不知道现有的 URL 是什么类型的,按照下面步骤:

- 尝试用 URL 初始化一个 AVURLAsset,然后加载它的 tracks 键。

如果轨道加载成功,就为该资产创建一个播放项目。

- 如果第一步失败,直接从 URL 创建一个 AVPlayerItem。

观察播放器的 status 属性来确定它是否变为可播放。

如果任一途径成功,你最终都会得到一个可以与播放器关联的播放项目。
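The two-step fallback for a URL of unknown type can be sketched as follows. This is only an illustration under stated assumptions: the function name MakePlayerItemForURL and its completion block are hypothetical, while the AVURLAsset and AVPlayerItem calls are the ones already used in this chapter.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: hands back a player item for a URL of unknown type.
static void MakePlayerItemForURL(NSURL *url, void (^completion)(AVPlayerItem *item)) {
    AVURLAsset *asset = [AVURLAsset URLAssetWithURL:url options:nil];
    NSString *tracksKey = @"tracks";
    [asset loadValuesAsynchronouslyForKeys:@[tracksKey] completionHandler:^{
        NSError *error = nil;
        AVKeyValueStatus status = [asset statusOfValueForKey:tracksKey error:&error];
        dispatch_async(dispatch_get_main_queue(), ^{
            if (status == AVKeyValueStatusLoaded) {
                // Step 1 succeeded: build the item from the loaded asset.
                completion([AVPlayerItem playerItemWithAsset:asset]);
            } else {
                // Step 1 failed: fall back to creating the item directly from the URL.
                completion([AVPlayerItem playerItemWithURL:url]);
            }
        });
    }];
}
```

Either way the caller receives a player item, associates it with a player, and then observes the status property to learn whether the item actually becomes playable.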

Playing an Item - 播放一个项目

To start playback, you send a play message to the player.

发送一个播放消息给播放器,开始播放:

- (IBAction)play:sender {

[player play];

}

In addition to simply playing, you can manage various aspects of the playback, such as the rate and the location of the playhead. You can also monitor the play state of the player; this is useful if you want to, for example, synchronize the user interface to the presentation state of the asset (see Monitoring Playback).

除了简单的播放,可以管理播放的各个方面,如速度和播放头的位置。也可以监视播放器的播放状态;这是很有用的,例如如果你想将用户界面同步到资产的呈现状态 – 详情看:Monitoring Playback.

Changing the Playback Rate - 改变播放的速率

You change the rate of playback by setting the player’s rate property.

通过设置播放器的 rate 属性来改变播放速率。

aPlayer.rate = 0.5;

aPlayer.rate = 2.0;

A value of 1.0 means “play at the natural rate of the current item”. Setting the rate to 0.0 is the same as pausing playback; you can also use pause.

值如果是 1.0 意味着 “当前项目按正常速率播放”。将速率设置为 0.0 就和暂停播放一样了 – 也可以使用 pause

Items that support reverse playback can use the rate property with a negative number to set the reverse playback rate. You determine the type of reverse play that is supported by using the playerItem properties canPlayReverse (supports a rate value of -1.0), canPlaySlowReverse (supports rates between 0.0 and -1.0) and canPlayFastReverse (supports rate values less than -1.0).

支持逆向播放的项目可以把 rate 属性设置为负数来指定反向播放速率。可以通过 playerItem 的属性确定所支持的反向播放类型:canPlayReverse(支持速率值 -1.0)、canPlaySlowReverse(支持 0.0 到 -1.0 之间的速率)和 canPlayFastReverse(支持小于 -1.0 的速率值)。
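One way to apply the three capability properties is to check the one that matches the requested rate before setting it. The sketch below assumes a hypothetical helper named PlayInReverse; only the canPlayReverse, canPlaySlowReverse, canPlayFastReverse, and rate properties come from AVFoundation.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: starts reverse playback at the requested (negative) rate
// only if the current item reports support for that kind of reverse play.
static BOOL PlayInReverse(AVPlayer *player, float rate) {
    AVPlayerItem *item = player.currentItem;
    if (item == nil || rate >= 0.0f) {
        return NO; // Nothing to play, or the rate is not a reverse rate.
    }
    BOOL supported;
    if (rate == -1.0f) {
        supported = item.canPlayReverse;      // Exactly -1.0
    } else if (rate > -1.0f) {
        supported = item.canPlaySlowReverse;  // Between 0.0 and -1.0
    } else {
        supported = item.canPlayFastReverse;  // Less than -1.0
    }
    if (supported) {
        player.rate = rate;
    }
    return supported;
}
```

For example, PlayInReverse(aPlayer, -0.5f) leaves the rate untouched and returns NO when the item cannot play in slow reverse.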

Seeking—Repositioning the Playhead - 寻找 - 重新定位播放头

To move the playhead to a particular time, you generally use seekToTime: as follows:

通常使用 seekToTime: 把播放头移动到一个指定的时间,示例:

CMTime fiveSecondsIn=CMTimeMake(5, 1);

[player seekToTime:fiveSecondsIn];

The seekToTime: method, however, is tuned for performance rather than precision. If you need to move the playhead precisely, instead you use seekToTime:toleranceBefore:toleranceAfter: as in the following code fragment:

然而 seekToTime: 方法针对性能而不是精度做了优化。如果你需要精确地移动播放头,需要改用 seekToTime:toleranceBefore:toleranceAfter:,示例代码:

CMTime fiveSecondsIn=CMTimeMake(5, 1);

[player seekToTime:fiveSecondsIn toleranceBefore:kCMTimeZero toleranceAfter:kCMTimeZero];

Using a tolerance of zero may require the framework to decode a large amount of data. You should use zero only if you are, for example, writing a sophisticated media editing application that requires precise control.

After playback, the player’s head is set to the end of the item and further invocations of play have no effect. To position the playhead back at the beginning of the item, you can register to receive an AVPlayerItemDidPlayToEndTimeNotification notification from the item. In the notification’s callback method, you invoke seekToTime: with the argument kCMTimeZero.

使用零容差可能需要框架解码大量的数据。只有在(例如)编写需要精确控制的复杂媒体编辑应用时,才应该使用零。

播放之后,播放器的头被设置在项目的结尾处,接着进行播放的调用没有任何影响。将播放头放置在项目的开始位置,可以注册从项目接收一个 AVPlayerItemDidPlayToEndTimeNotification 消息。在消息的回调方法中,调用带着参数 kCMTimeZero 的 seekToTime: 方法。

// Register with the notification center after creating the player item.

[[NSNotificationCenter defaultCenter]

addObserver:self

selector:@selector(playerItemDidReachEnd:)

name:AVPlayerItemDidPlayToEndTimeNotification

object:<#The player item#>];

- (void)playerItemDidReachEnd:(NSNotification *)notification {

[player seekToTime:kCMTimeZero];

}

Playing Multiple Items - 播放多个项目

You can use an AVQueuePlayer object to play a number of items in sequence. The AVQueuePlayer class is a subclass of AVPlayer. You initialize a queue player with an array of player items.

可以使用 AVQueuePlayer 对象去播放队列中的一些项目。AVQueuePlayer 类是 AVPlayer 的子类。初始化一个带着播放项目数组的队列播放器:

NSArray *items = <#An array of player items#>;

AVQueuePlayer *queuePlayer = [[AVQueuePlayer alloc] initWithItems:items];

You can then play the queue using play, just as you would an AVPlayer object. The queue player plays each item in turn. If you want to skip to the next item, you send the queue player an advanceToNextItem message.

可以使用 play 播放队列,就像使用 AVPlayer 对象一样。队列播放器依次播放每个项目。如果想要跳到下一项,给队列播放器发送一个 advanceToNextItem 消息。

You can modify the queue using insertItem:afterItem:, removeItem:, and removeAllItems. When adding a new item, you should typically check whether it can be inserted into the queue, using canInsertItem:afterItem:. You pass nil as the second argument to test whether the new item can be appended to the queue.

可以使用 insertItem:afterItem:、removeItem: 和 removeAllItems 这三个方法修改队列。当添加一个新项目时,通常应该使用 canInsertItem:afterItem: 检查它是否可以被插入到队列中。传 nil 作为第二个参数,可以测试新项目能否追加到队列末尾。

AVPlayerItem *anItem = <#Get a player item#>;

if ([queuePlayer canInsertItem:anItem afterItem:nil]) {

[queuePlayer insertItem:anItem afterItem:nil];

}

Monitoring Playback - 监视播放

You can monitor a number of aspects of both the presentation state of a player and the player item being played. This is particularly useful for state changes that are not under your direct control. For example:

- If the user uses multitasking to switch to a different application, a player’s rate property will drop to 0.0.

- If you are playing remote media, a player item’s loadedTimeRanges and seekableTimeRanges properties will change as more data becomes available.

These properties tell you what portions of the player item’s timeline are available.

- A player’s currentItem property changes as a player item is created for an HTTP live stream.

- A player item’s tracks property may change while playing an HTTP live stream.

This may happen if the stream offers different encodings for the content; the tracks change if the player switches to a different encoding.

- A player or player item’s status property may change if playback fails for some reason.

You can use key-value observing to monitor changes to values of these properties.

可以监视播放器的呈现状态和正在播放的播放项目的很多方面。对于那些不在你直接控制之下的状态改变,监视尤其有用。例如:

- 如果用户使用多任务处理切换到另一个应用程序,播放器的 rate 属性将下降到 0.0。

- 如果正在播放远程媒体,随着更多数据变为可用,播放项目的 loadedTimeRanges 和 seekableTimeRanges 属性将会改变。

这些属性告诉你,播放项目时间轴的那一部分是可用的。

- 当为 HTTP 直播流创建播放项目时,播放器的 currentItem 属性会发生变化。

- 当播放 HTTP 直播流时,播放项目的 tracks 属性可能会改变。

如果流为内容提供了不同的编码,就可能发生上述情况;如果播放器切换到不同的编码,轨道就会改变。

- 如果因为一些原因播放失败,播放器或者播放项目的 status 属性可能会改变。

可以使用 key-value observing 去监视这些属性值的改变。

Important: You should register for KVO change notifications and unregister from KVO change notifications on the main thread. This avoids the possibility of receiving a partial notification if a change is being made on another thread. AV Foundation invokes observeValueForKeyPath:ofObject:change:context: on the main thread, even if the change operation is made on another thread.

重要:你应该在主线程上注册和注销 KVO 改变通知。如果在另一个线程上正在进行更改,这样可以避免收到不完整通知的可能性。AV Foundation 在主线程中调用 observeValueForKeyPath:ofObject:change:context:,即使改变操作是在另一个线程中进行的。

Responding to a Change in Status - 响应状态的变化

When a player or player item’s status changes, it emits a key-value observing change notification. If an object is unable to play for some reason (for example, if the media services are reset), the status changes to AVPlayerStatusFailed or AVPlayerItemStatusFailed as appropriate. In this situation, the value of the object’s error property is changed to an error object that describes why the object is no longer able to play.

当一个播放器或者播放项目的 status 改变时,它会发出一个 key-value observing 改变通知。如果一个对象由于一些原因不能播放(例如,媒体服务被重置),status 会相应地变为 AVPlayerStatusFailed 或者 AVPlayerItemStatusFailed。在这种情况下,对象的 error 属性的值被更改为一个错误对象,该对象描述了为什么对象不能再播放。

AV Foundation does not specify which thread the notification is sent on. If you want to update the user interface, you must make sure that any relevant code is invoked on the main thread. This example uses dispatch_async to execute code on the main thread.

AV Foundation 没有指定通知在哪个线程上发送。如果要更新用户界面,必须确保相关的代码都是在主线程被调用的。这个例子使用了 dispatch_async 在主线程中执行代码。

- (void)observeValueForKeyPath:(NSString *)keyPath ofObject:(id)object

change:(NSDictionary *)change context:(void *)context {

if (context == <#Player status context#>) {

AVPlayer *thePlayer = (AVPlayer *)object;

if ([thePlayer status] == AVPlayerStatusFailed) {

NSError *error = [<#The AVPlayer object#> error];

// Respond to error: for example, display an alert sheet.

return;

}

// Deal with other status change if appropriate.

}

// Deal with other change notifications if appropriate.

[super observeValueForKeyPath:keyPath ofObject:object

change:change context:context];

return;

}

Tracking Readiness for Visual Display - 为视觉展示做追踪准备

You can observe an AVPlayerLayer object’s readyForDisplay property to be notified when the layer has user-visible content. In particular, you might insert the player layer into the layer tree only when there is something for the user to look at and then perform a transition from.

可以观察一个 AVPlayerLayer 对象的 readyForDisplay 属性,以便在层有了用户可见的内容时得到通知。特别是,可以只在有内容可供用户观看时才将播放器层插入到层树中,然后再执行过渡动画。
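As a rough sketch of this idea, the class below observes readyForDisplay with key-value observing and adds the player layer to a host layer only once it has visible content. The class name LayerReadinessObserver, its properties, and the context variable are hypothetical; the guide does not prescribe this structure.

```objc
#import <UIKit/UIKit.h>
#import <AVFoundation/AVFoundation.h>

// Hypothetical context pointer used to recognize this observation.
static void *ReadyForDisplayContext = &ReadyForDisplayContext;

// Hypothetical object that inserts its player layer only once video is visible.
@interface LayerReadinessObserver : NSObject
@property (nonatomic, strong) AVPlayerLayer *playerLayer;
@property (nonatomic, weak) CALayer *hostLayer;
- (void)startObserving;
- (void)stopObserving;
@end

@implementation LayerReadinessObserver

- (void)startObserving {
    [self.playerLayer addObserver:self
                       forKeyPath:@"readyForDisplay"
                          options:NSKeyValueObservingOptionInitial
                          context:ReadyForDisplayContext];
}

- (void)stopObserving {
    [self.playerLayer removeObserver:self
                          forKeyPath:@"readyForDisplay"
                             context:ReadyForDisplayContext];
}

- (void)observeValueForKeyPath:(NSString *)keyPath ofObject:(id)object
                        change:(NSDictionary *)change context:(void *)context {
    if (context != ReadyForDisplayContext) {
        [super observeValueForKeyPath:keyPath ofObject:object change:change context:context];
        return;
    }
    // Layer-tree changes belong on the main thread.
    dispatch_async(dispatch_get_main_queue(), ^{
        if (self.playerLayer.readyForDisplay && self.playerLayer.superlayer == nil) {
            self.playerLayer.frame = self.hostLayer.bounds;
            [self.hostLayer addSublayer:self.playerLayer];
        }
    });
}

@end
```

Each call to startObserving should be balanced by a call to stopObserving before the observer is deallocated.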

Tracking Time - 追踪时间

To track changes in the position of the playhead in an AVPlayer object, you can use addPeriodicTimeObserverForInterval:queue:usingBlock: or addBoundaryTimeObserverForTimes:queue:usingBlock:. You might do this to, for example, update your user interface with information about time elapsed or time remaining, or perform some other user interface synchronization.

- With addPeriodicTimeObserverForInterval:queue:usingBlock:, the block you provide is invoked at the interval you specify, if time jumps, and when playback starts or stops.

- With addBoundaryTimeObserverForTimes:queue:usingBlock:, you pass an array of CMTime structures contained in NSValue objects. The block you provide is invoked whenever any of those times is traversed.

追踪一个 AVPlayer 对象中播放头位置的变化,可以使用 addPeriodicTimeObserverForInterval:queue:usingBlock: 或者 addBoundaryTimeObserverForTimes:queue:usingBlock: 。可以这样做,例如更新用户界面与时间消耗或者剩余时间的有关信息,或者执行一些其他用户界面的同步。

- 使用 addPeriodicTimeObserverForInterval:queue:usingBlock: 时,你提供的块会按你指定的时间间隔被调用,在时间发生跳跃以及播放开始或停止时也会被调用。

- 使用 addBoundaryTimeObserverForTimes:queue:usingBlock: 时,传递一个由 NSValue 对象包装的 CMTime 结构体组成的数组。每当播放经过其中任何一个时间点时,你提供的块都会被调用。

Both of the methods return an opaque object that serves as an observer. You must keep a strong reference to the returned object as long as you want the time observation block to be invoked by the player. You must also balance each invocation of these methods with a corresponding call to removeTimeObserver:.

With both of these methods, AV Foundation does not guarantee to invoke your block for every interval or boundary passed. AV Foundation does not invoke a block if execution of a previously invoked block has not completed. You must make sure, therefore, that the work you perform in the block does not overly tax the system.

这两种方法都返回一个作为观察者的不透明对象。只要你希望播放器调用时间观察的块,就必须对返回的对象保持一个强引用。每次调用这些方法,都必须有一次对应的 removeTimeObserver: 调用与之配对。

有了这两种方法, AV Foundation 不保证每个间隔或者通过边界时都调用你的块。如果以前调用的块执行没有完成,AV Foundation不会调用块。因此必须确保你在该块中执行的工作不会对系统过载。
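For the periodic variant, a minimal sketch that reports the elapsed time twice per second might look like this. The function name AddElapsedTimeObserver, the update block, and the 0.5-second interval are assumptions chosen for illustration.

```objc
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: reports elapsed seconds every half second on the main queue.
// The caller must keep a strong reference to the returned token and later pass it
// to removeTimeObserver: on the same player.
static id AddElapsedTimeObserver(AVPlayer *player, void (^update)(Float64 seconds)) {
    CMTime interval = CMTimeMakeWithSeconds(0.5, NSEC_PER_SEC);
    return [player addPeriodicTimeObserverForInterval:interval
                                                queue:dispatch_get_main_queue()
                                           usingBlock:^(CMTime time) {
        // Keep the work here light; a slow block can cause later invocations to be skipped.
        update(CMTimeGetSeconds(time));
    }];
}
```

A view controller could store the result in a strong property such as the playerObserver property assumed in the guide's listing, and call [player removeTimeObserver:self.playerObserver] when it no longer needs updates.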

// Assume a property: @property (strong) id playerObserver;

Float64 durationSeconds = CMTimeGetSeconds([<#An asset#> duration]);

CMTime firstThird = CMTimeMakeWithSeconds(durationSeconds/3.0, 1);

CMTime secondThird = CMTimeMakeWithSeconds(durationSeconds*2.0/3.0, 1);

NSArray *times = @[[NSValue valueWithCMTime:firstThird], [NSValue valueWithCMTime:secondThird]];

self.playerObserver = [<#A player#> addBoundaryTimeObserverForTimes:times queue:NULL usingBlock:^{

NSString *timeDescription = (NSString *)

CFBridgingRelease(CMTimeCopyDescription(NULL, [self.player currentTime]));

NSLog(@"Passed a boundary at %@", timeDescription);

}];

Reaching the End of an Item - 到达一个项目的结束

You can register to receive an AVPlayerItemDidPlayToEndTimeNotification notification when a player item has completed playback.

当一个播放项目已经完成播放的时候,可以注册接收一个 AVPlayerItemDidPlayToEndTimeNotification 通知。

[[NSNotificationCenter defaultCenter] addObserver:<#The observer, typically self#> selector:@selector(<#The selector name#>) name:AVPlayerItemDidPlayToEndTimeNotification object:<#A player item#>];

Putting It All Together: Playing a Video File Using AVPlayerLayer - 总而言之,使用 AVPlayerLayer 播放视频文件

This brief code example illustrates how you can use an AVPlayer object to play a video file. It shows how to:

- Configure a view to use an AVPlayerLayer layer

- Create an AVPlayer object

- Create an AVPlayerItem object for a file-based asset and use key-value observing to observe its status

- Respond to the item becoming ready to play by enabling a button

- Play the item and then restore the player’s head to the beginning

这个简短的代码示例演示如何使用一个 AVPlayer 对象播放一个视频文件。它显示了如何:

- 使用 AVPlayerLayer 层配置视图

- 创建一个 AVPlayer 对象

- 创建一个基于文件资产的 AVPlayerItem 对象,并使用 key-value observing 去观察它的状态

- 通过启用按钮来响应项目准备就绪播放

- 播放项目,然后将播放头重置到开始位置

Note: To focus on the most relevant code, this example omits several aspects of a complete application, such as memory management and unregistering as an observer (for key-value observing or for the notification center). To use AV Foundation, you are expected to have enough experience with Cocoa to be able to infer the missing pieces.

注意:为了关注最相关的代码,这个例子省略了一个完整应用程序的几个方面,比如内存管理和注销观察者(key-value observing 或者 notification center)。为了使用 AV Foundation,你应该有足够的 Cocoa 经验,有能力去推断出缺失的部分。

For a conceptual introduction to playback, skip to Playing Assets.

对于播放的概念性的介绍,跳去看 Playing Assets。

The Player View - 播放器视图

To play the visual component of an asset, you need a view containing an AVPlayerLayer layer to which the output of an AVPlayer object can be directed. You can create a simple subclass of UIView to accommodate this:

播放一个资产的可视化部分,需要一个包含 AVPlayerLayer 层的视图,AVPlayer 对象的输出可以指向这个层。可以创建一个 UIView 的简单子类来实现:

#import <UIKit/UIKit.h>

#import <AVFoundation/AVFoundation.h>

@interface PlayerView : UIView

@property (nonatomic) AVPlayer *player;

@end

@implementation PlayerView

+ (Class)layerClass {

return [AVPlayerLayer class];

}

- (AVPlayer*)player {

return [(AVPlayerLayer *)[self layer] player];

}

- (void)setPlayer:(AVPlayer *)player {

[(AVPlayerLayer *)[self layer] setPlayer:player];

}

@end

A Simple View Controller - 一个简单的 View Controller

Assume you have a simple view controller, declared as follows:

假设你有一个简单的 view controller,声明如下:

@class PlayerView;

@interface PlayerViewController : UIViewController

@property (nonatomic) AVPlayer *player;

@property (nonatomic) AVPlayerItem *playerItem;

@property (nonatomic, weak) IBOutlet PlayerView *playerView;

@property (nonatomic, weak) IBOutlet UIButton *playButton;

- (IBAction)loadAssetFromFile:sender;

- (IBAction)play:sender;

- (void)syncUI;

@end

The syncUI method synchronizes the button’s state with the player’s state:

syncUI 方法同步按钮状态和播放器的状态:

- (void)syncUI {

if ((self.player.currentItem != nil) &&

([self.player.currentItem status] == AVPlayerItemStatusReadyToPlay)) {

self.playButton.enabled = YES;

}

else {

self.playButton.enabled = NO;

}

}

You can invoke syncUI in the view controller’s viewDidLoad method to ensure a consistent user interface when the view is first displayed.

可以在视图控制器的 viewDidLoad 方法中调用 syncUI,以确保视图第一次显示时用户界面的一致性。

- (void)viewDidLoad {

[super viewDidLoad];

[self syncUI];

}

The other properties and methods are described in the remaining sections.

在其余章节描述其他属性和方法。

Creating the Asset - 创建一个资产

You create an asset from a URL using AVURLAsset. (The following example assumes your project contains a suitable video resource.)

使用 AVURLAsset 从一个 URL 创建一个资产。(下面的例子假设你的工程包含了一个合适的视频资源)

- (IBAction)loadAssetFromFile:sender {

NSURL *fileURL = [[NSBundle mainBundle]

URLForResource:<#@"VideoFileName"#> withExtension:<#@"extension"#>];

AVURLAsset *asset = [AVURLAsset URLAssetWithURL:fileURL options:nil];

NSString *tracksKey = @"tracks";

[asset loadValuesAsynchronouslyForKeys:@[tracksKey] completionHandler:

^{

// The completion block goes here.

}];

}

In the completion block, you create an instance of AVPlayerItem for the asset and set it as the player for the player view. As with creating the asset, simply creating the player item does not mean it’s ready to use. To determine when it’s ready to play, you can observe the item’s status property. You should configure this observing before associating the player item instance with the player itself.

You trigger the player item’s preparation to play when you associate it with the player.

在完成块中,为资产创建一个 AVPlayerItem 的实例,并设置它为播放页面的播放器。与创建资产一样,简单地创建播放器项目并不意味着它已经准备好使用。为了确定它已经准备好了,可以观察项目的 status 属性。你应该在该播放器项目实例与播放器本身关联之前,配置这个 observing 。

当你将它与播放器连接时,就是触发播放项目的播放准备。

// Define this constant for the key-value observation context.

static const NSString *ItemStatusContext;

// Completion handler block.

dispatch_async(dispatch_get_main_queue(),

^{

NSError *error;

AVKeyValueStatus status = [asset statusOfValueForKey:tracksKey error:&error];

if (status == AVKeyValueStatusLoaded) {

self.playerItem = [AVPlayerItem playerItemWithAsset:asset];

// ensure that this is done before the playerItem is associated with the player

[self.playerItem addObserver:self forKeyPath:@"status"

options:NSKeyValueObservingOptionInitial context:&ItemStatusContext];

[[NSNotificationCenter defaultCenter] addObserver:self

selector:@selector(playerItemDidReachEnd:)

name:AVPlayerItemDidPlayToEndTimeNotification

object:self.playerItem];

self.player = [AVPlayer playerWithPlayerItem:self.playerItem];

[self.playerView setPlayer:self.player];

}

else {

// You should deal with the error appropriately.

NSLog(@"The asset's tracks were not loaded:\n%@", [error localizedDescription]);

}

});

Responding to the Player Item’s Status Change - 响应播放项目的状态改变

When the player item’s status changes, the view controller receives a key-value observing change notification. AV Foundation does not specify which thread the notification is sent on. If you want to update the user interface, you must make sure that any relevant code is invoked on the main thread. This example uses dispatch_async to queue a message on the main thread to synchronize the user interface.

当播放项目的状态改变时,视图控制器接收一个 key-value observing 改变通知。AV Foundation 没有指定通知发送的是什么线程。如果你想更新用户界面,必须确保任何相关的代码都要在主线程中调用。这个例子使用 dispatch_async 让主线程同步用户界面的消息进入队列。
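A minimal sketch of the observer method this section describes is shown here. It is a fragment that belongs inside the PlayerViewController implementation, and it assumes the ItemStatusContext constant and the syncUI method defined earlier in this chapter.

```objc
// Fragment: place inside @implementation PlayerViewController.
// Assumes ItemStatusContext and -syncUI as defined earlier in this chapter.
- (void)observeValueForKeyPath:(NSString *)keyPath ofObject:(id)object
                        change:(NSDictionary *)change context:(void *)context {
    if (context == &ItemStatusContext) {
        // The notification may arrive on any thread, so update the UI on the main queue.
        dispatch_async(dispatch_get_main_queue(), ^{
            [self syncUI];
        });
        return;
    }
    // Not our observation: pass it up the chain.
    [super observeValueForKeyPath:keyPath ofObject:object
                           change:change context:context];
}
```

Because syncUI reads the current item's status, the play button becomes enabled once the item reports AVPlayerItemStatusReadyToPlay.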

Playing the Item - 播放项目

Playing the item involves sending a play message to the player.

播放项目就是向播放器发送一个 play 消息。

- (IBAction)play:sender {

[self.player play];

}

The item is played only once. After playback, the player’s head is set to the end of the item, and further invocations of the play method will have no effect. To position the playhead back at the beginning of the item, you can register to receive an AVPlayerItemDidPlayToEndTimeNotification from the item. In the notification’s callback method, invoke seekToTime: with the argument kCMTimeZero.

该项目只播放一次。播放之后,播放器的头被设置在项目的结束位置,播放方法进一步调用将没有效果。将播放头放在项目的开始,可以注册从项目去接收 AVPlayerItemDidPlayToEndTimeNotification。在通知的回调方法,调用带着参数 kCMTimeZero 的 seekToTime: 方法。

// Register with the notification center after creating the player item.

[[NSNotificationCenter defaultCenter] addObserver:self

selector:@selector(playerItemDidReachEnd:)

name:AVPlayerItemDidPlayToEndTimeNotification

object:[self.player currentItem]];

- (void)playerItemDidReachEnd:(NSNotification *)notification {

[self.player seekToTime:kCMTimeZero];

}

Editing - 编辑

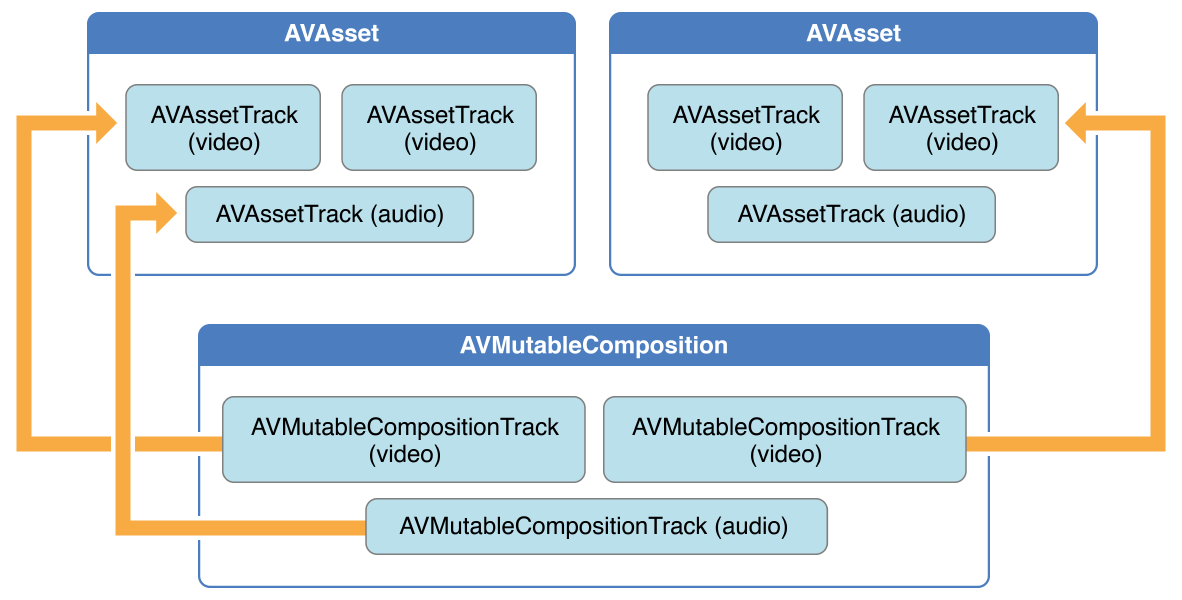

The AVFoundation framework provides a feature-rich set of classes to facilitate the editing of audio visual assets. At the heart of AVFoundation’s editing API are compositions. A composition is simply a collection of tracks from one or more different media assets. The AVMutableComposition class provides an interface for inserting and removing tracks, as well as managing their temporal orderings. Figure 3-1 shows how a new composition is pieced together from a combination of existing assets to form a new asset. If all you want to do is merge multiple assets together sequentially into a single file, that is as much detail as you need. If you want to perform any custom audio or video processing on the tracks in your composition, you need to incorporate an audio mix or a video composition, respectively.

AVFoundation 框架提供了一个功能丰富的类集合去帮助音视频资产的编辑。AVFoundation 编辑 API 的核心是组合(composition)。一个组合就是来自一个或者多个不同媒体资产的轨道的集合。AVMutableComposition 类提供了插入和移除轨道以及管理它们时间顺序的接口。图 3-1 显示了一个新的组合是怎样由一些现有的资产拼接起来,形成新的资产。如果你想做的只是将多个资产按顺序合并为一个单一的文件,了解这些细节就足够了。如果你想对组合中的轨道执行任何自定义的音频或视频处理,则需要分别加入一个音频混合(audio mix)或者视频组合(video composition)。

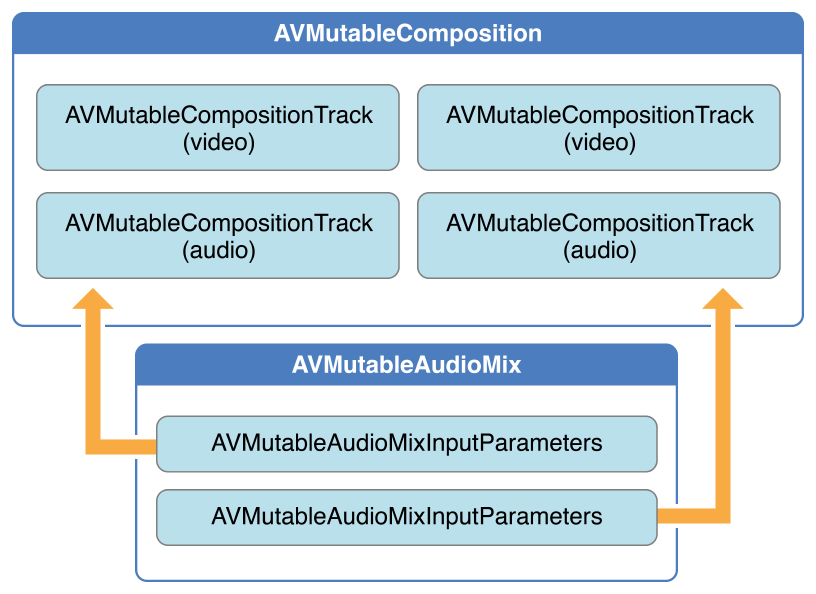

Using the AVMutableAudioMix class, you can perform custom audio processing on the audio tracks in your composition, as shown in Figure 3-2. Currently, you can specify a maximum volume or set a volume ramp for an audio track.

使用 AVMutableAudioMix 类,可以在你作品的音频轨道中执行自定义处理,如图 3-2 所示。目前,你可以指定一个最大音量或设置一个音频轨道的音量斜坡

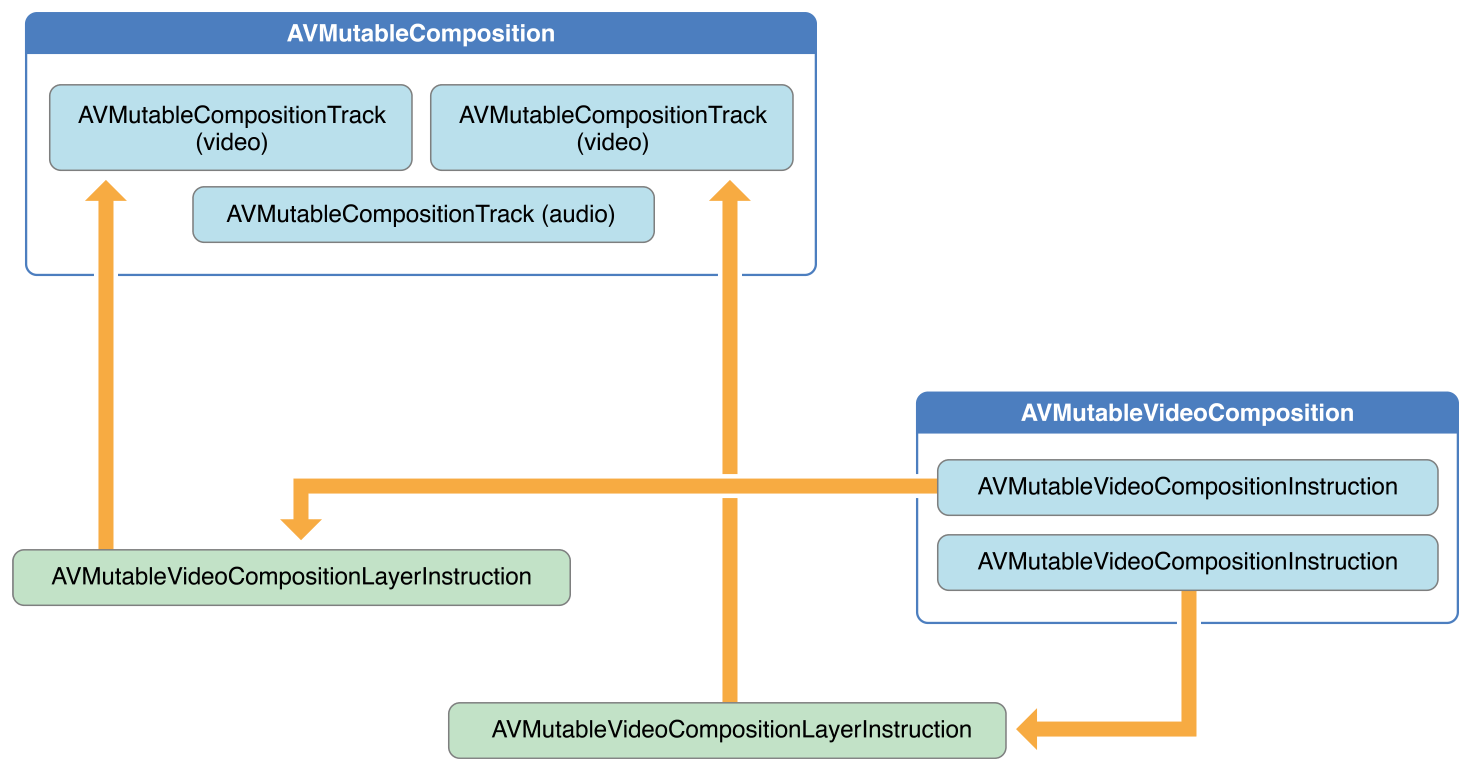

You can use the AVMutableVideoComposition class to work directly with the video tracks in your composition for the purposes of editing, shown in Figure 3-3. With a single video composition, you can specify the desired render size and scale, as well as the frame duration, for the output video. Through a video composition’s instructions (represented by the AVMutableVideoCompositionInstruction class), you can modify the background color of your video and apply layer instructions. These layer instructions (represented by the AVMutableVideoCompositionLayerInstruction class) can be used to apply transforms, transform ramps, opacity and opacity ramps to the video tracks within your composition. The video composition class also gives you the ability to introduce effects from the Core Animation framework into your video using the animationTool property.

可以使用 AVMutableVideoComposition 类直接处理组合中的视频轨道以进行编辑,如图 3-3 所示。通过一个视频组合,可以为输出视频指定所需的渲染尺寸和缩放比例,以及帧的持续时间。通过视频组合的指令(由 AVMutableVideoCompositionInstruction 类表示),你可以修改视频的背景颜色并应用层指令。这些层指令(由 AVMutableVideoCompositionLayerInstruction 类表示)可以用于对组合中的视频轨道应用变换、变换渐变、不透明度以及不透明度渐变。视频组合类还允许你通过 animationTool 属性把 Core Animation 框架的效果引入到视频中。

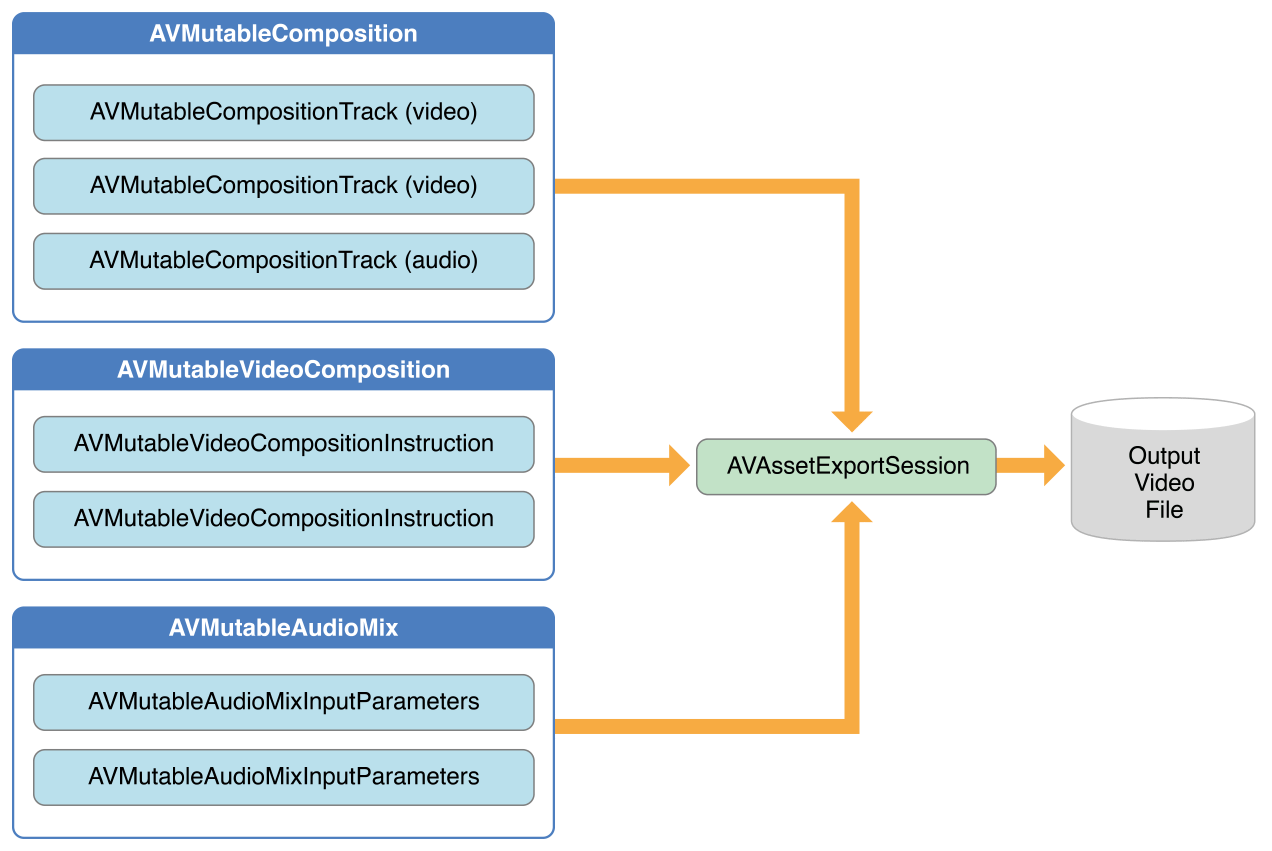

To combine your composition with an audio mix and a video composition, you use an AVAssetExportSession object, as shown in Figure 3-4. You initialize the export session with your composition and then simply assign your audio mix and video composition to the audioMix and videoComposition properties respectively.

要把组合与音频混合和视频组合结合起来,可以使用 AVAssetExportSession 对象,如图 3-4 所示。用你的组合初始化导出会话,然后简单地分别将音频混合和视频组合赋给 audioMix 和 videoComposition 属性。

Creating a Composition - 创建组件

To create your own composition, you use the AVMutableComposition class. To add media data to your composition, you must add one or more composition tracks, represented by the AVMutableCompositionTrack class. The simplest case is creating a mutable composition with one video track and one audio track:

使用 AVMutableComposition 类创建自己的组件。在你的组件中添加媒体数据,必须添加一个或者多个组件轨道,以 AVMutableCompositionTrack 类为代表。最简单的例子创建一个有一个音频轨道和一个视频轨道的可变组件。

AVMutableComposition *mutableComposition = [AVMutableComposition composition];

// Create the video composition track.

AVMutableCompositionTrack *mutableCompositionVideoTrack = [mutableComposition addMutableTrackWithMediaType:AVMediaTypeVideo preferredTrackID:kCMPersistentTrackID_Invalid];

// Create the audio composition track.

AVMutableCompositionTrack *mutableCompositionAudioTrack = [mutableComposition addMutableTrackWithMediaType:AVMediaTypeAudio preferredTrackID:kCMPersistentTrackID_Invalid];

Options for Initializing a Composition Track - 初始化组件轨道的选项

When adding new tracks to a composition, you must provide both a media type and a track ID. Although audio and video are the most commonly used media types, you can specify other media types as well, such as AVMediaTypeSubtitle or AVMediaTypeText.

Every track associated with some audiovisual data has a unique identifier referred to as a track ID. If you specify kCMPersistentTrackID_Invalid as the preferred track ID, a unique identifier is automatically generated for you and associated with the track.

当给组件添加新的轨道时,必须提供媒体类型和轨道 ID。虽然音频和视频是最常用的媒体类型,你也可以指定其他媒体类型,比如 AVMediaTypeSubtitle 或者 AVMediaTypeText。

每个和视听数据相关联的轨道都有一个唯一的标识符,叫做 track ID。如果你指定 kCMPersistentTrackID_Invalid 作为首选的 track ID,将会自动为你生成一个唯一的标识符并且与轨道相关联。

Adding Audiovisual Data to a Composition - 将视听数据添加到一个组件中

Once you have a composition with one or more tracks, you can begin adding your media data to the appropriate tracks. To add media data to a composition track, you need access to the AVAsset object where the media data is located. You can use the mutable composition track interface to place multiple tracks with the same underlying media type together on the same track. The following example illustrates how to add two different video asset tracks in sequence to the same composition track:

一旦有了带着一个或多个轨道的组件,就可以把你的媒体数据添加到适当的轨道中。为了将媒体数据添加到组件轨道,需要访问媒体数据所在的 AVAsset 对象。可以使用可变组件轨道接口将具有相同底层媒体类型的多个轨道放置到同一个轨道上。下面的示例演示了如何将两个不同的视频资产轨道按顺序添加到同一个组件轨道中。

// You can retrieve AVAssets from a number of places, like the camera roll for example.

AVAsset *videoAsset = <#AVAsset with at least one video track#>;

AVAsset *anotherVideoAsset = <#another AVAsset with at least one video track#>;

// Get the first video track from each asset.

AVAssetTrack *videoAssetTrack = [[videoAsset tracksWithMediaType:AVMediaTypeVideo] objectAtIndex:0];

AVAssetTrack *anotherVideoAssetTrack = [[anotherVideoAsset tracksWithMediaType:AVMediaTypeVideo] objectAtIndex:0];

// Add them both to the composition.

[mutableCompositionVideoTrack insertTimeRange:CMTimeRangeMake(kCMTimeZero,videoAssetTrack.timeRange.duration) ofTrack:videoAssetTrack atTime:kCMTimeZero error:nil];

[mutableCompositionVideoTrack insertTimeRange:CMTimeRangeMake(kCMTimeZero,anotherVideoAssetTrack.timeRange.duration) ofTrack:anotherVideoAssetTrack atTime:videoAssetTrack.timeRange.duration error:nil];
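The fragment above passes nil for the error parameter to stay short. A hedged sketch of the same insertion with basic error checking might look like the following; the helper name InsertWholeTrack is hypothetical, and the caller is assumed to supply the time at which the source track should start in the composition.

```objective-c
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of AVFoundation): inserts the full duration of
// sourceTrack into compositionTrack at startTime and reports any failure.
static BOOL InsertWholeTrack(AVMutableCompositionTrack *compositionTrack,
                             AVAssetTrack *sourceTrack,
                             CMTime startTime) {
    NSError *error = nil;

    // CMTimeRangeMake takes a start time and a duration.
    CMTimeRange sourceRange = CMTimeRangeMake(kCMTimeZero, sourceTrack.timeRange.duration);

    BOOL inserted = [compositionTrack insertTimeRange:sourceRange
                                              ofTrack:sourceTrack
                                               atTime:startTime
                                                error:&error];
    if (!inserted) {
        NSLog(@"Could not insert track %d: %@", sourceTrack.trackID, error);
    }
    return inserted;
}
```

With this helper, the two calls above would become InsertWholeTrack(mutableCompositionVideoTrack, videoAssetTrack, kCMTimeZero) followed by InsertWholeTrack(mutableCompositionVideoTrack, anotherVideoAssetTrack, videoAssetTrack.timeRange.duration).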

Retrieving Compatible Composition Tracks - 检索兼容的组件轨道

Where possible, you should have only one composition track for each media type. This unification of compatible asset tracks leads to a minimal amount of resource usage. When presenting media data serially, you should place any media data of the same type on the same composition track. You can query a mutable composition to find out if there are any composition tracks compatible with your desired asset track:

在可能的情况下,每个媒体类型应该只有一个组件轨道。这种统一兼容的资产轨道可以达到最小的资源使用量。当串行显示媒体数据时,应该将相同类型的媒体数据放置在相同的组件轨道上。你可以查询一个可变组件,找出是否有组件轨道与你想要的资产轨道兼容。

AVMutableCompositionTrack *compatibleCompositionTrack = [mutableComposition mutableTrackCompatibleWithTrack:<#the AVAssetTrack you want to insert#>];

if (compatibleCompositionTrack) {

// Implementation continues.

}

Note: Placing multiple video segments on the same composition track can potentially lead to dropping frames at the transitions between video segments, especially on embedded devices. Choosing the number of composition tracks for your video segments depends entirely on the design of your app and its intended platform.

注意:在相同的组件轨道上放置多个视频片段,可能会导致视频片段之间的过渡处掉帧,尤其是在嵌入式设备上。为视频片段选择多少个组件轨道,完全取决于你的应用程序的设计及其目标平台。
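One way to act on the query above is to reuse a compatible composition track when one exists and create a new track only as a fallback. The sketch below assumes that policy; the function name CompositionTrackForAssetTrack is hypothetical.

```objective-c
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of AVFoundation): returns a composition track
// that can accept assetTrack, creating one only when no compatible track exists.
static AVMutableCompositionTrack *CompositionTrackForAssetTrack(AVMutableComposition *composition,
                                                                AVAssetTrack *assetTrack) {
    // Prefer an existing compatible track to keep resource usage low.
    AVMutableCompositionTrack *compositionTrack =
        [composition mutableTrackCompatibleWithTrack:assetTrack];

    if (compositionTrack == nil) {
        // No compatible track yet: add one with the same media type and let
        // the composition generate the track ID.
        compositionTrack =
            [composition addMutableTrackWithMediaType:assetTrack.mediaType
                                     preferredTrackID:kCMPersistentTrackID_Invalid];
    }
    return compositionTrack;
}
```

Whether to always reuse a track is a design decision; as the note above points out, separate tracks can be preferable when transitions between video segments drop frames.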

Generating a Volume Ramp - 生成一个音量坡度

A single AVMutableAudioMix object can perform custom audio processing on all of the audio tracks in your composition individually. You create an audio mix using the audioMix class method, and you use instances of the AVMutableAudioMixInputParameters class to associate the audio mix with specific tracks within your composition. An audio mix can be used to vary the volume of an audio track. The following example displays how to set a volume ramp on a specific audio track to slowly fade the audio out over the duration of the composition:

一个单独的 AVMutableAudioMix 对象就可以对组件中的所有音频轨道分别执行自定义音频处理。可以使用 audioMix 类方法创建一个音频混合,使用 AVMutableAudioMixInputParameters 类的实例将音频混合与组件中指定的轨道关联起来。音频混合可以用来改变音频轨道的音量。下面的例子展示了如何在一个指定的音频轨道上设置音量坡度,使音频在组件的持续时间内缓慢淡出:

AVMutableAudioMix *mutableAudioMix = [AVMutableAudioMix audioMix];

// Create the audio mix input parameters object.

AVMutableAudioMixInputParameters *mixParameters = [AVMutableAudioMixInputParameters audioMixInputParametersWithTrack:mutableCompositionAudioTrack];

// Set the volume ramp to slowly fade the audio out over the duration of the composition.

[mixParameters setVolumeRampFromStartVolume:1.f toEndVolume:0.f timeRange:CMTimeRangeMake(kCMTimeZero, mutableComposition.duration)];

// Attach the input parameters to the audio mix.

mutableAudioMix.inputParameters = @[mixParameters];

Performing Custom Video Processing - 执行自定义视频处理

As with an audio mix, you only need one AVMutableVideoComposition object to perform all of your custom video processing on your composition’s video tracks. Using a video composition, you can directly set the appropriate render size, scale, and frame rate for your composition’s video tracks. For a detailed example of setting appropriate values for these properties, see Setting the Render Size and Frame Duration.

与音频混合一样,只需要一个 AVMutableVideoComposition 对象就可以对组件的视频轨道执行所有自定义视频处理。使用视频组件,可以直接为组件的视频轨道设置适当的渲染尺寸、缩放比例以及帧速率。有关设置这些属性值的详细示例,请看 Setting the Render Size and Frame Duration
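As a rough sketch of the properties mentioned above, the following function creates a video composition and sets its render size, render scale, and frame duration. The function name and the 30 frames-per-second value are assumptions chosen for illustration, and using the track's naturalSize ignores any rotation stored in preferredTransform.

```objective-c
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper (not part of AVFoundation): builds a video composition
// sized to match a source video track.
static AVMutableVideoComposition *MakeVideoComposition(AVAssetTrack *videoAssetTrack) {
    AVMutableVideoComposition *videoComposition = [AVMutableVideoComposition videoComposition];

    // Render at the track's natural size (assumes no rotation is needed).
    videoComposition.renderSize = videoAssetTrack.naturalSize;

    // A scale of 1.0 renders at full size.
    videoComposition.renderScale = 1.0f;

    // One frame every 1/30 of a second, that is, 30 frames per second (assumed value).
    videoComposition.frameDuration = CMTimeMake(1, 30);

    return videoComposition;
}
```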

Changing the Composition’s Background Color - 改变组件的背景颜色

All video compositions must also have an array of AVVideoCompositionInstruction objects containing at least one video composition instruction. You use the AVMutableVideoCompositionInstruction class to create your own video composition instructions. Using video composition instructions, you can modify the composition’s background color, specify whether post processing is needed or apply layer instructions.

The following example illustrates how to create a video composition instruction that changes the background color to red for the entire composition.

所有的视频组件还必须有一个 AVVideoCompositionInstruction 对象的数组,数组中至少包含一个视频组件指令。使用 AVMutableVideoCompositionInstruction 类去创建自己的视频组件指令。使用视频组件指令,可以修改组件的背景颜色,指定是否需要后期处理,或者应用层指令。

下面的例子展示了如何创建一个视频组件指令,将整个组件的背景颜色改为红色。

AVMutableVideoCompositionInstruction *mutableVideoCompositionInstruction = [AVMutableVideoCompositionInstruction videoCompositionInstruction];

mutableVideoCompositionInstruction.timeRange = CMTimeRangeMake(kCMTimeZero, mutableComposition.duration);

mutableVideoCompositionInstruction.backgroundColor = [[UIColor redColor] CGColor];

Applying Opacity Ramps - 应用不透明度渐变

Video composition instructions can also be used to apply video composition layer instructions. An AVMutableVideoCompositionLayerInstruction object can apply transforms, transform ramps, opacity and opacity ramps to a certain video track within a composition. The order of the layer instructions in a video composition instruction’s layerInstructions array determines how video frames from source tracks should be layered and composed for the duration of that composition instruction. The following code fragment shows how to set an opacity ramp to slowly fade out the first video in a composition before transitioning to the second video:

视频组件指令也可以用来应用视频组件层指令。一个 AVMutableVideoCompositionLayerInstruction 对象可以对组件内的某个视频轨道应用变换、变换渐变、不透明度和不透明度渐变。视频组件指令的 layerInstructions 数组中层指令的顺序,决定了在该组件指令的持续时间内,来自源轨道的视频帧应该如何分层和合成。下面的代码片段展示了如何设置一个不透明度渐变,使第一个视频在过渡到第二个视频之前慢慢淡出:
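Instructions that play back to back need time ranges that follow one another without gaps. Because CMTimeRangeMake takes a start time and a duration (not an end time), the second range starts where the first ends and uses only the second segment's own duration. The sketch below illustrates this with assumed durations of 10 and 5 seconds; the function name is hypothetical.

```objective-c
#import <Foundation/Foundation.h>
#import <CoreMedia/CoreMedia.h>

// Hypothetical helper: builds two consecutive time ranges and logs where each ends.
static void LogSequentialRanges(void) {
    CMTime firstDuration = CMTimeMake(10, 1);   // 10 seconds (assumed)
    CMTime secondDuration = CMTimeMake(5, 1);   // 5 seconds (assumed)

    // First range: starts at zero and lasts 10 seconds.
    CMTimeRange firstRange = CMTimeRangeMake(kCMTimeZero, firstDuration);

    // Second range: starts where the first ends and lasts 5 seconds.
    CMTimeRange secondRange = CMTimeRangeMake(CMTimeRangeGetEnd(firstRange), secondDuration);

    // The first range ends at 10 seconds and the second at 15 seconds.
    NSLog(@"First range ends at %.1f s", CMTimeGetSeconds(CMTimeRangeGetEnd(firstRange)));
    NSLog(@"Second range ends at %.1f s", CMTimeGetSeconds(CMTimeRangeGetEnd(secondRange)));
}
```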

AVAssetTrack *firstVideoAssetTrack = <#AVAssetTrack representing the first video segment played in the composition#>;

AVAssetTrack *secondVideoAssetTrack = <#AVAssetTrack representing the second video segment played in the composition#>;

// Create the first video composition instruction.

AVMutableVideoCompositionInstruction *firstVideoCompositionInstruction = [AVMutableVideoCompositionInstruction videoCompositionInstruction];

// Set its time range to span the duration of the first video track.

firstVideoCompositionInstruction.timeRange = CMTimeRangeMake(kCMTimeZero, firstVideoAssetTrack.timeRange.duration);

// Create the layer instruction and associate it with the composition video track.

AVMutableVideoCompositionLayerInstruction *firstVideoLayerInstruction = [AVMutableVideoCompositionLayerInstruction videoCompositionLayerInstructionWithAssetTrack:mutableCompositionVideoTrack];

// Create the opacity ramp to fade out the first video track over its entire duration.

[firstVideoLayerInstruction setOpacityRampFromStartOpacity:1.f toEndOpacity:0.f timeRange:CMTimeRangeMake(kCMTimeZero, firstVideoAssetTrack.timeRange.duration)];

// Create the second video composition instruction so that the second video track isn't transparent.

AVMutableVideoCompositionInstruction *secondVideoCompositionInstruction = [AVMutableVideoCompositionInstruction videoCompositionInstruction];

// Set its time range to span the duration of the second video track.

secondVideoCompositionInstruction.timeRange = CMTimeRangeMake(firstVideoAssetTrack.timeRange.duration, secondVideoAssetTrack.timeRange.duration);

// Create the second layer instruction and associate it with the composition video track.

AVMutableVideoCompositionLayerInstruction *secondVideoLayerInstruction = [AVMutableVideoCompositionLayerInstruction videoCompositionLayerInstructionWithAssetTrack:mutableCompositionVideoTrack];

// Attach the first layer instruction to the first video composition instruction.

firstVideoCompositionInstruction.layerInstructions = @[firstVideoLayerInstruction];

// Attach the second layer instruction to the second video composition instruction.

secondVideoCompositionInstruction.layerInstructions = @[secondVideoLayerInstruction];

// Attach both of the video composition instructions to the video composition.

AVMutableVideoComposition *mutableVideoComposition = [AVMutableVideoComposition videoComposition];

mutableVideoComposition.instructions = @[firstVideoCompositionInstruction, secondVideoCompositionInstruction];

Incorporating Core Animation Effects - 结合核心动画效果

A video composition can add the power of Core Animation to your composition through the animationTool property. Through this animation tool, you can accomplish tasks such as watermarking video and adding titles or animating overlays. Core Animation can be used in two different ways with video compositions: You can add a Core Animation layer as its own individual composition track, or you can render Core Animation effects (using a Core Animation layer) into the video frames in your composition directly. The following code displays the latter option by adding a watermark to the center of the video:

视频组件可以通过 animationTool 属性将核心动画的能力添加到你的组件中。通过这个动画工具,可以完成一些任务,例如给视频加水印、添加标题或者动画覆盖层。核心动画可以通过两种不同的方式用于视频组件:可以将一个核心动画层添加为它自己单独的组件轨道,或者可以(使用一个核心动画层)将核心动画效果直接渲染到组件的视频帧中。下面的代码展示了后一种方式,在视频中央添加一个水印:

CALayer *watermarkLayer = <#CALayer representing your desired watermark image#>;

CALayer *parentLayer = [CALayer layer];

CALayer *videoLayer = [CALayer layer];

parentLayer.frame = CGRectMake(0, 0, mutableVideoComposition.renderSize.width, mutableVideoComposition.renderSize.height);

videoLayer.frame = CGRectMake(0, 0, mutableVideoComposition.renderSize.width, mutableVideoComposition.renderSize.height);

[parentLayer addSublayer:videoLayer];

watermarkLayer.position = CGPointMake(mutableVideoComposition.renderSize.width/2, mutableVideoComposition.renderSize.height/4);

[parentLayer addSublayer:watermarkLayer];

mutableVideoComposition.animationTool = [AVVideoCompositionCoreAnimationTool videoCompositionCoreAnimationToolWithPostProcessingAsVideoLayer:videoLayer inLayer:parentLayer];

Putting It All Together: Combining Multiple Assets and Saving the Result to the Camera Roll - 综合示例:组合多个资产并将结果保存到相机胶卷

This brief code example illustrates how you can combine two video asset tracks and an audio asset track to create a single video file. It shows how to:

- Create an AVMutableComposition object and add multiple AVMutableCompositionTrack objects

- Add time ranges of AVAssetTrack objects to compatible composition tracks

- Check the preferredTransform property of a video asset track to determine the video’s orientation

- Use AVMutableVideoCompositionLayerInstruction objects to apply transforms to the video tracks within a composition

- Set appropriate values for the renderSize and frameDuration properties of a video composition

- Use a composition in conjunction with a video composition when exporting to a video file

- Save a video file to the Camera Roll

这个简短的代码示例说明了如何将两个视频资产轨道和一个音频资产轨道结合起来,创建一个单独的视频文件。有下面几个方面:

- 创建一个 AVMutableComposition 对象并且添加多个 AVMutableCompositionTrack 对象

- 将 AVAssetTrack 对象的时间范围添加到兼容的组件轨道

- 检查视频资产轨道的 preferredTransform 的属性,决定视频的方向

- 使用 AVMutableVideoCompositionLayerInstruction 对象给组件内的视频轨道应用转换。

- 给视频组件的 renderSize 和 frameDuration 属性设置适当的值。

- 当导出视频文件时,将组件与视频组件结合使用

- 保存视频文件到相机胶卷

Note: To focus on the most relevant code, this example omits several aspects of a complete app, such as memory management and error handling. To use AVFoundation, you are expected to have enough experience with Cocoa to infer the missing pieces.

注意:为了关注最相关的代码,这个例子省略了一个完整应用程序的几个方面,如内存管理和错误处理。使用 AVFoundation 时,希望你有足够的 Cocoa 使用经验去推断出缺失的部分。

Creating the Composition - 创建组件

To piece together tracks from separate assets, you use an AVMutableComposition object. Create the composition and add one audio and one video track.

使用 AVMutableComposition 对象将来自不同资产的轨道拼接在一起。创建组件并且添加一个音频轨道和一个视频轨道。

AVMutableComposition *mutableComposition = [AVMutableComposition composition];

AVMutableCompositionTrack *videoCompositionTrack = [mutableComposition addMutableTrackWithMediaType:AVMediaTypeVideo preferredTrackID:kCMPersistentTrackID_Invalid];

AVMutableCompositionTrack *audioCompositionTrack = [mutableComposition addMutableTrackWithMediaType:AVMediaTypeAudio preferredTrackID:kCMPersistentTrackID_Invalid];

Adding the Assets - 添加资产

An empty composition does you no good. Add the two video asset tracks and the audio asset track to the composition.

一个空的组件没有任何用处。往组件中添加两个视频资产轨道和一个音频资产轨道。